Changelog

Faster defaults for Vercel Function CPU and memory

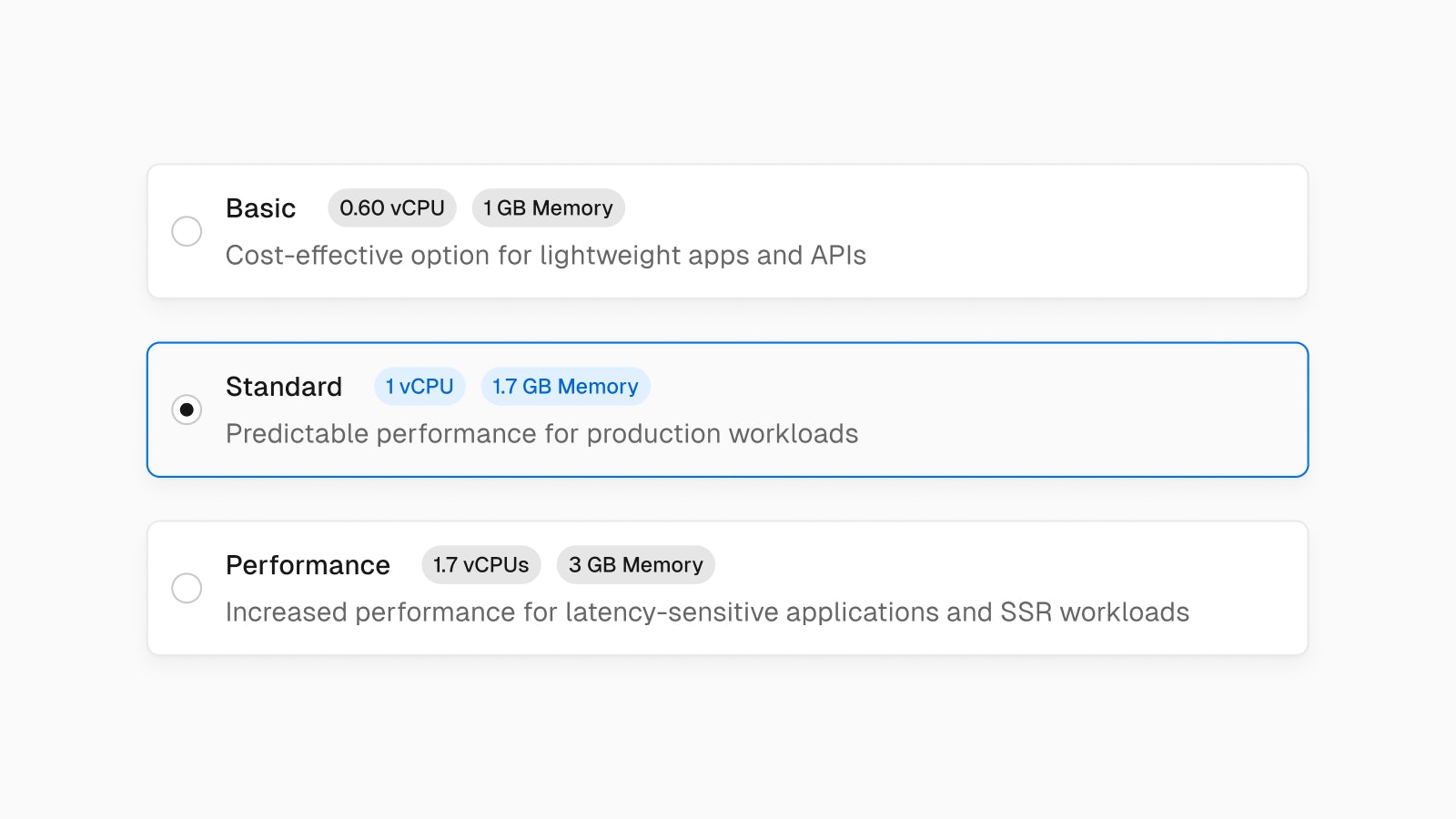

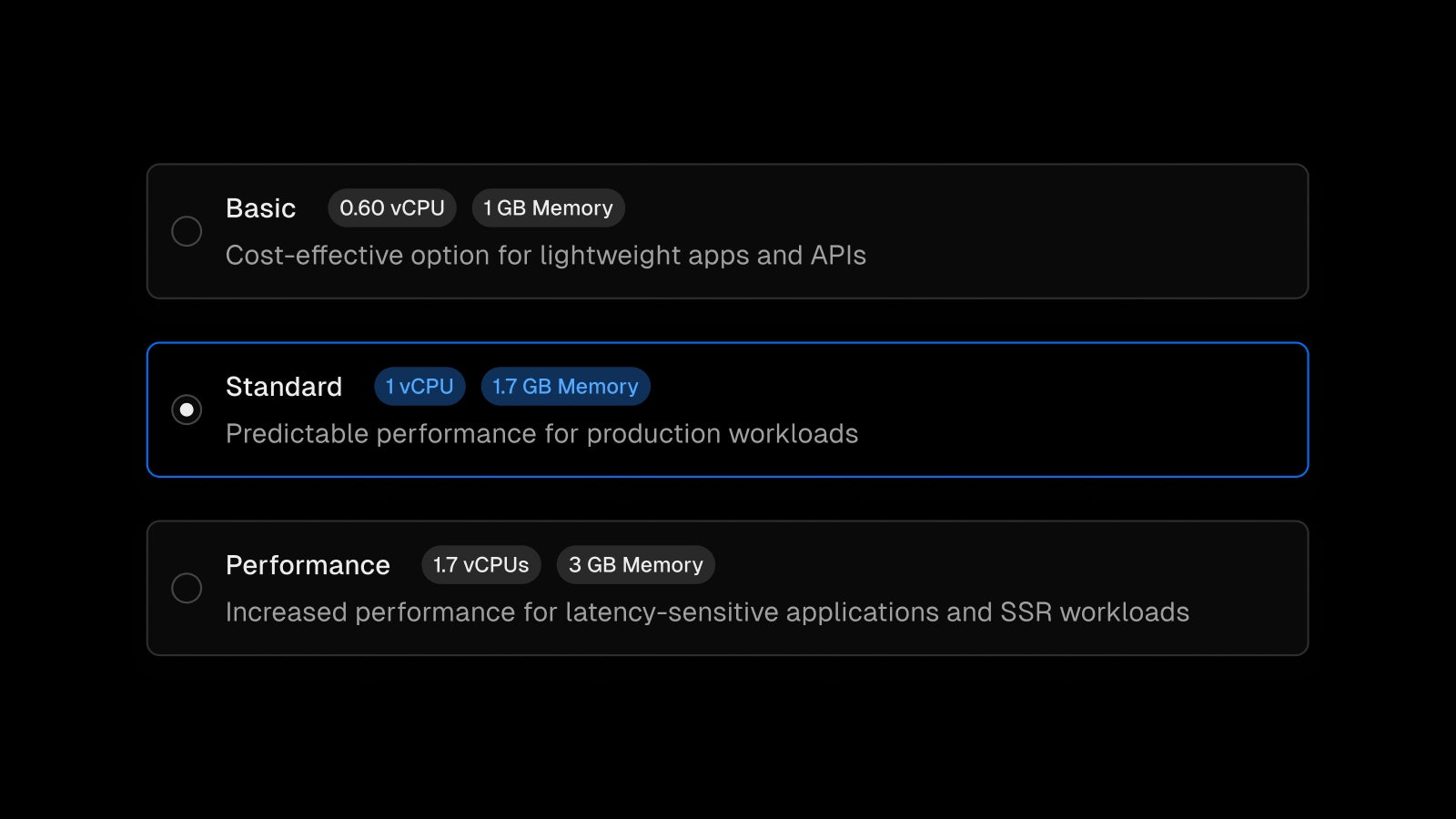

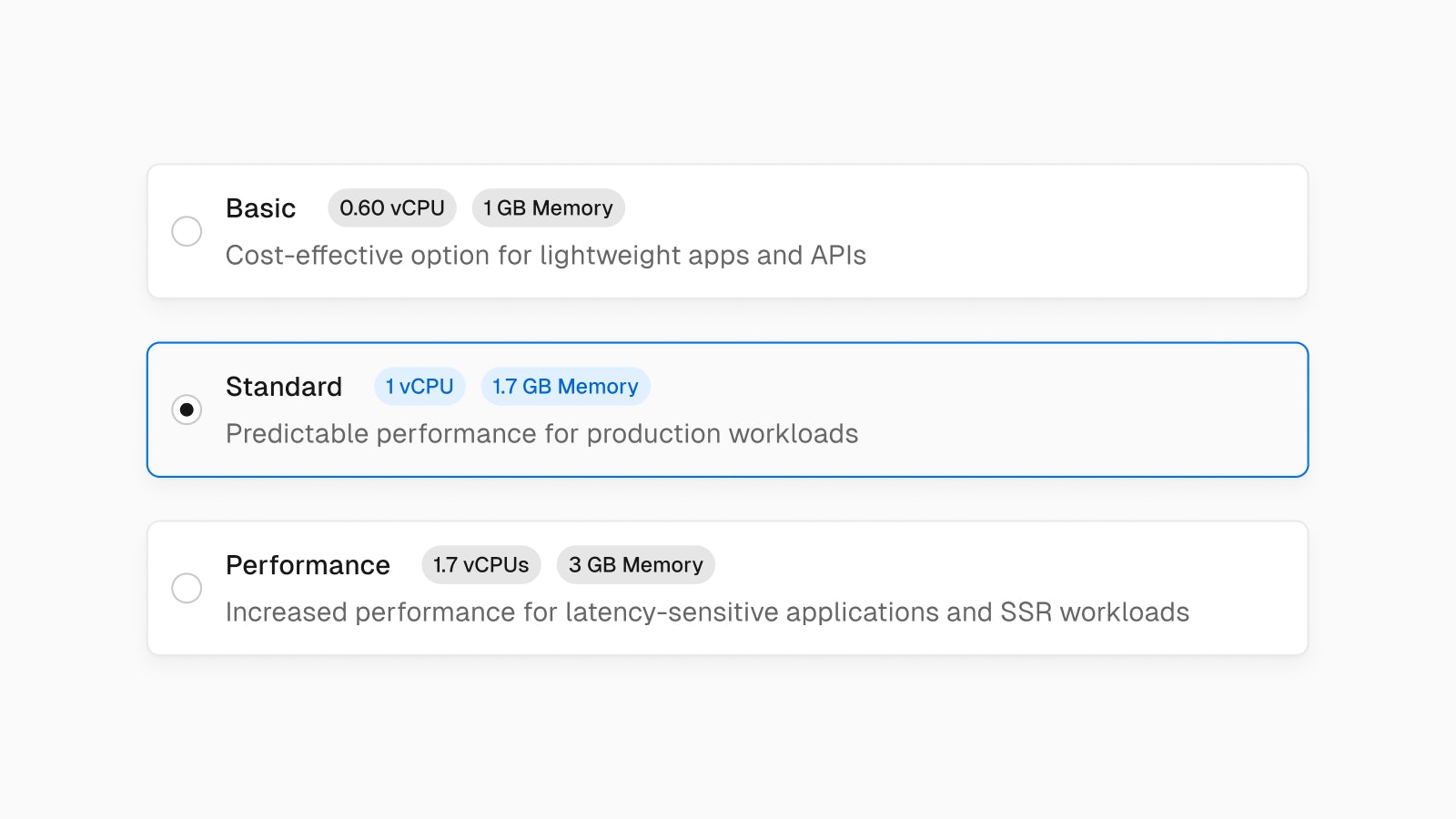

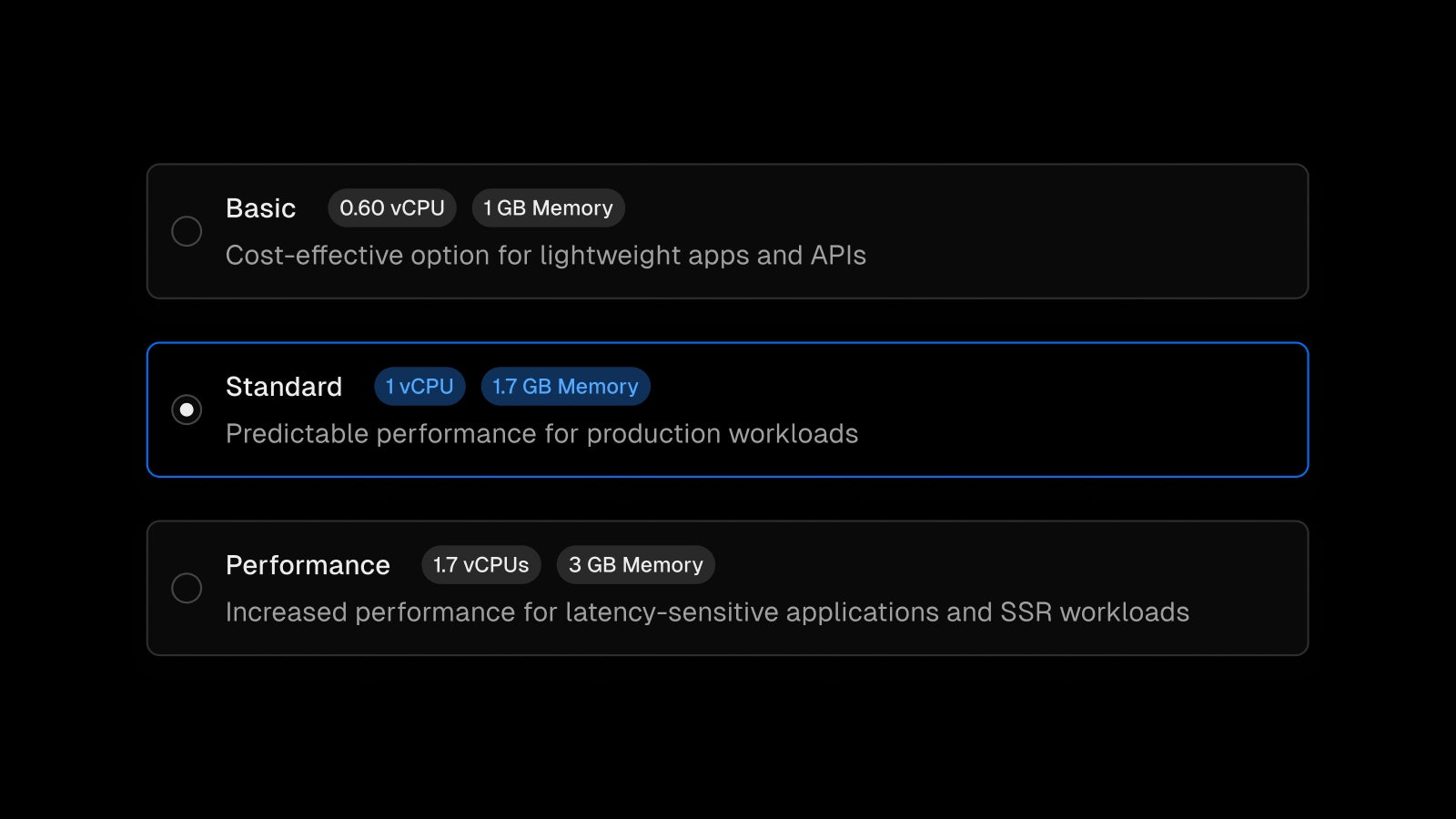

The default CPU for Vercel Functions will change from Basic (0.6 vCPU/1GB Memory) to Standard (1 vCPU/1.7GB Memory) for new projects created after May 6th, 2024. Existing projects will remain unchanged unless manually updated.

This change helps ensure consistent function performance and faster startup times. Depending on your function code size, this may reduce cold starts by a few hundred milliseconds.

While increasing the function CPU can increase costs for the same duration, it can also make functions execute faster. If functions execute faster, you incur less overall function duration usage. This is especially important if your function runs CPU-intensive tasks.
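To make this trade-off concrete, here is a rough sketch. It assumes, purely for illustration, that duration cost scales linearly with configured memory (a GB-seconds style model). The type and function names are hypothetical, and this is not Vercel's billing formula; your actual costs depend on your plan's pricing.

```typescript
// Hypothetical cost model (an assumption for illustration only):
// cost of one invocation is proportional to memory (GB) x duration (seconds).
interface FunctionSize {
  name: string;
  vcpu: number;
  memoryGb: number;
}

// CPU and memory figures are taken from the announcement above.
const basic: FunctionSize = { name: "Basic", vcpu: 0.6, memoryGb: 1 };
const standard: FunctionSize = { name: "Standard", vcpu: 1, memoryGb: 1.7 };

// Relative cost of one invocation, in GB-seconds.
function gbSeconds(size: FunctionSize, durationSeconds: number): number {
  return size.memoryGb * durationSeconds;
}

// Fraction of the original duration at which the larger size costs
// the same as the smaller one under this model.
function breakEvenDurationRatio(from: FunctionSize, to: FunctionSize): number {
  return from.memoryGb / to.memoryGb;
}

// Best-case duration ratio for a purely CPU-bound function, assuming
// (optimistically) that run time shrinks in proportion to vCPU.
function cpuBoundDurationRatio(from: FunctionSize, to: FunctionSize): number {
  return from.vcpu / to.vcpu;
}
```

Under these assumptions, `breakEvenDurationRatio(basic, standard)` is about 0.59, meaning Standard costs no more than Basic once a function finishes in roughly 59% of its original time. A perfectly CPU-bound function would land near 60%, which is close to break-even, while I/O-bound functions would see less benefit.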

This change will be applied to all paid plan customers (Pro and Enterprise). No action is required.

Check out our documentation to learn more.

Improved infrastructure pricing is now active for new customers

Earlier this month, we announced our improved infrastructure pricing, which is active for new customers starting today.

Billing for existing customers begins between June 25 and July 24. For more details, please reference the email with next steps sent to your account. Existing Enterprise contracts are unaffected.

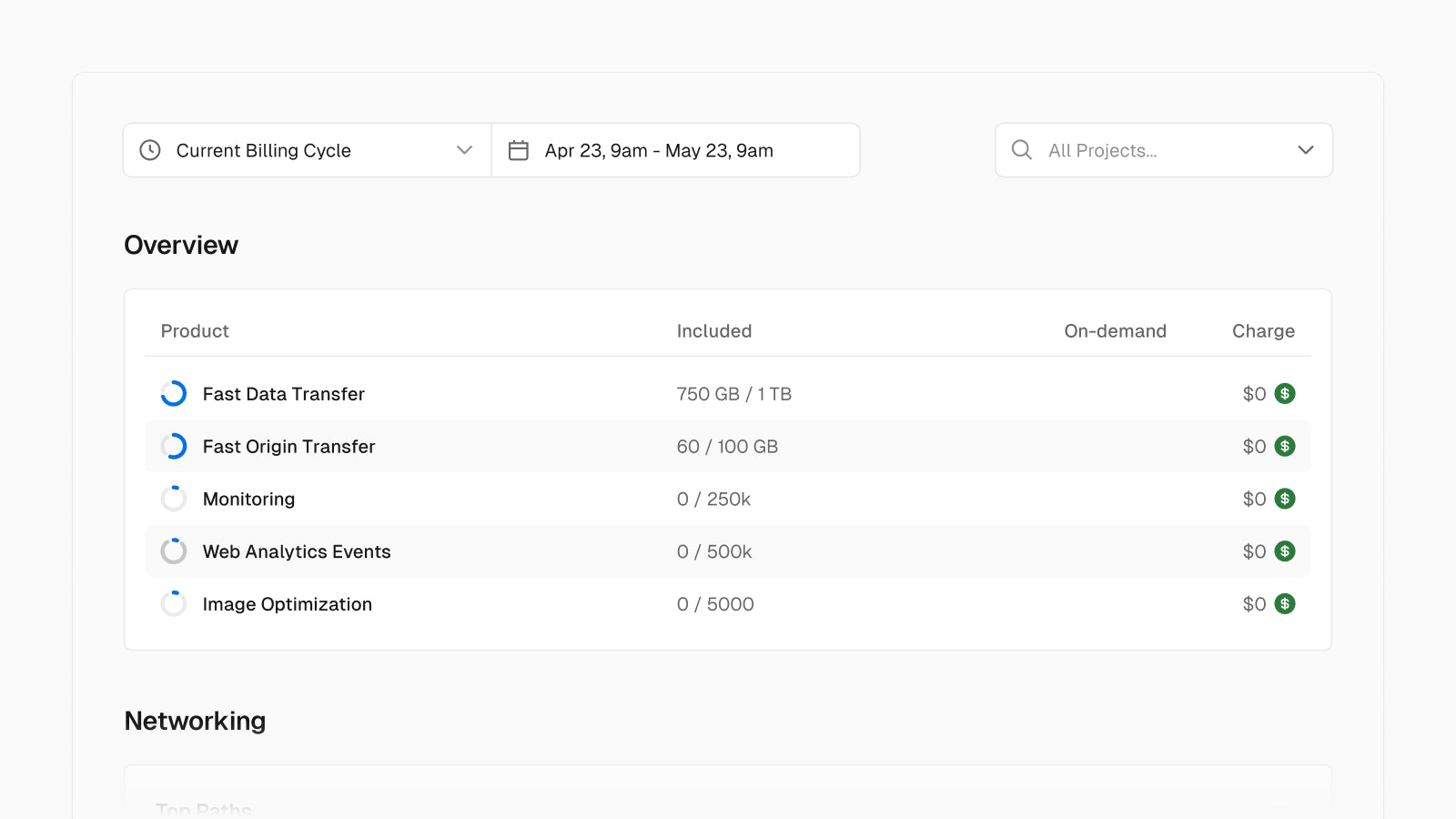

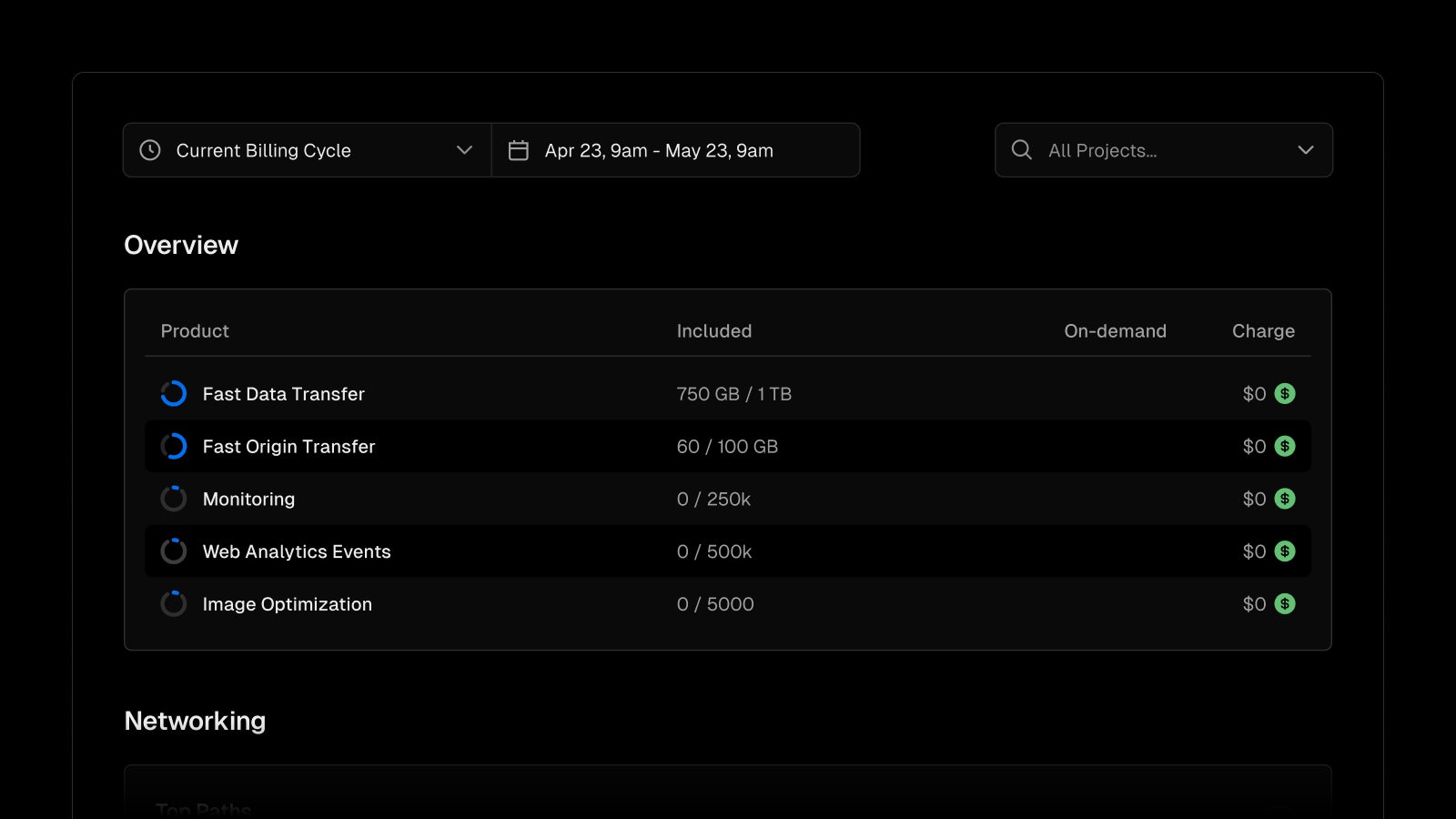

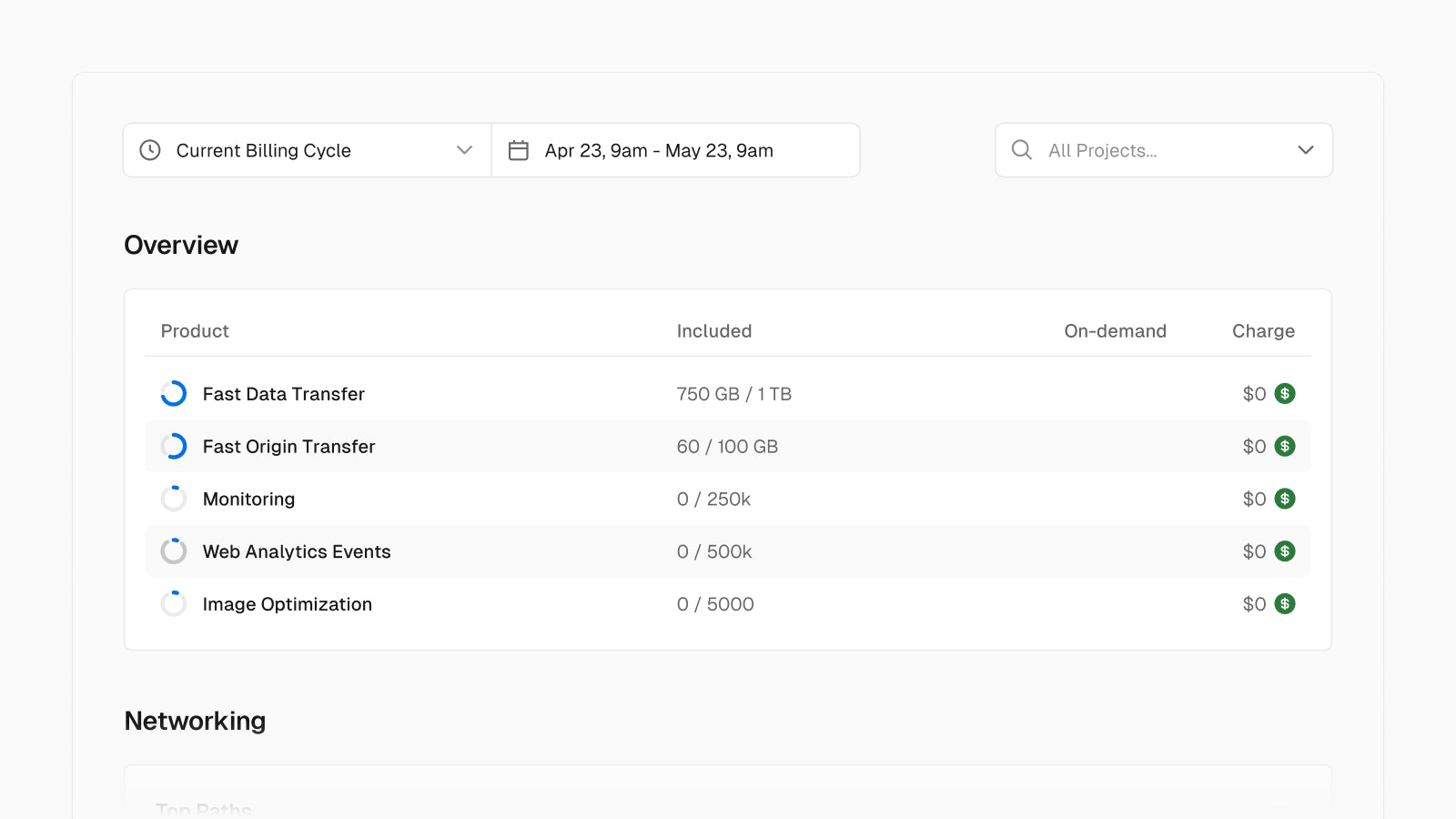

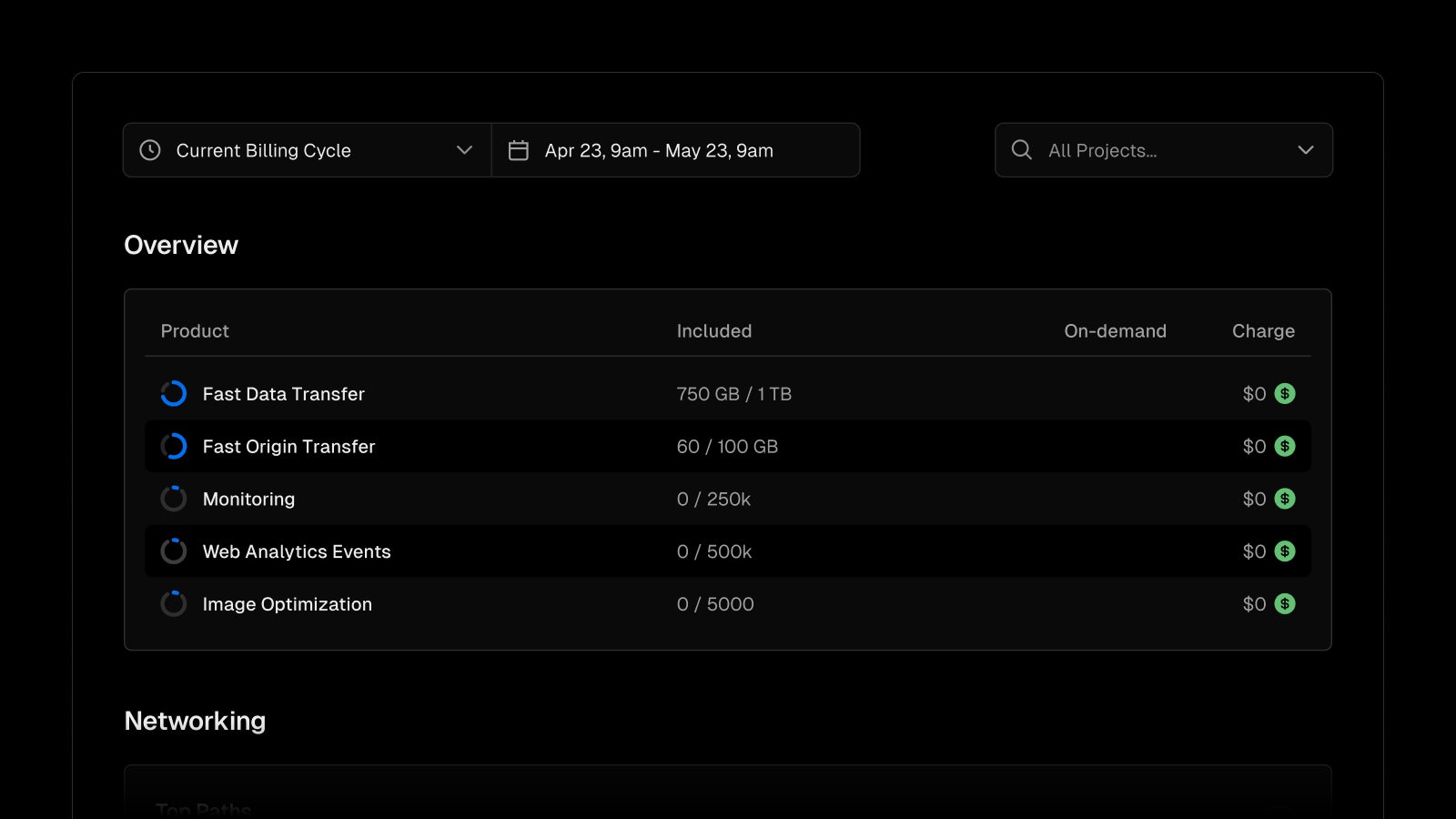

Our previous combined metrics (bandwidth and functions) are now more granular, and have reduced base prices. These new metrics can be viewed and optimized from our improved Usage page.

These pricing improvements build on recent platform features to help automatically prevent runaway spend, including hard spend limits, recursion protection, improved function defaults, Attack Challenge Mode, and more.

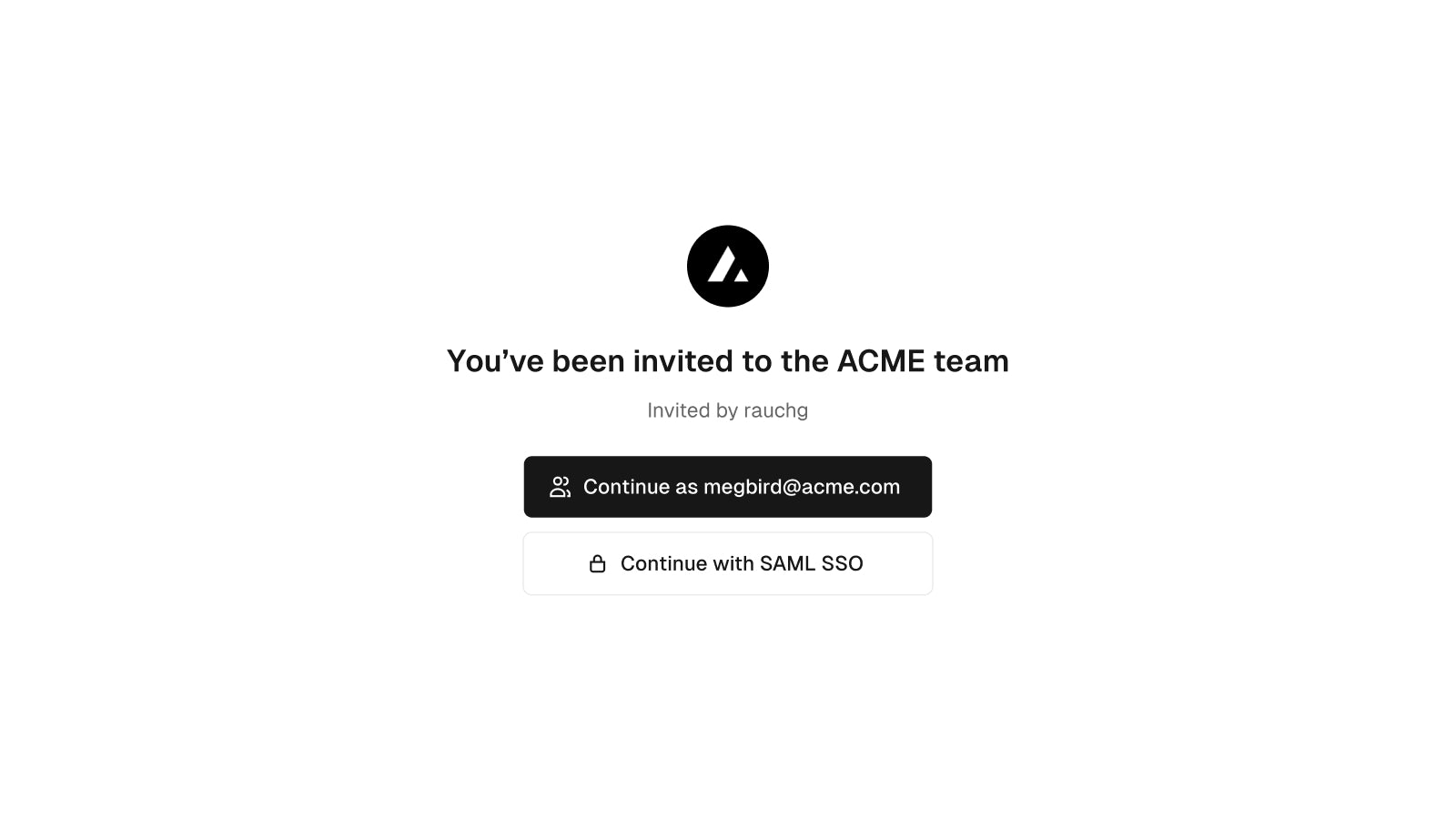

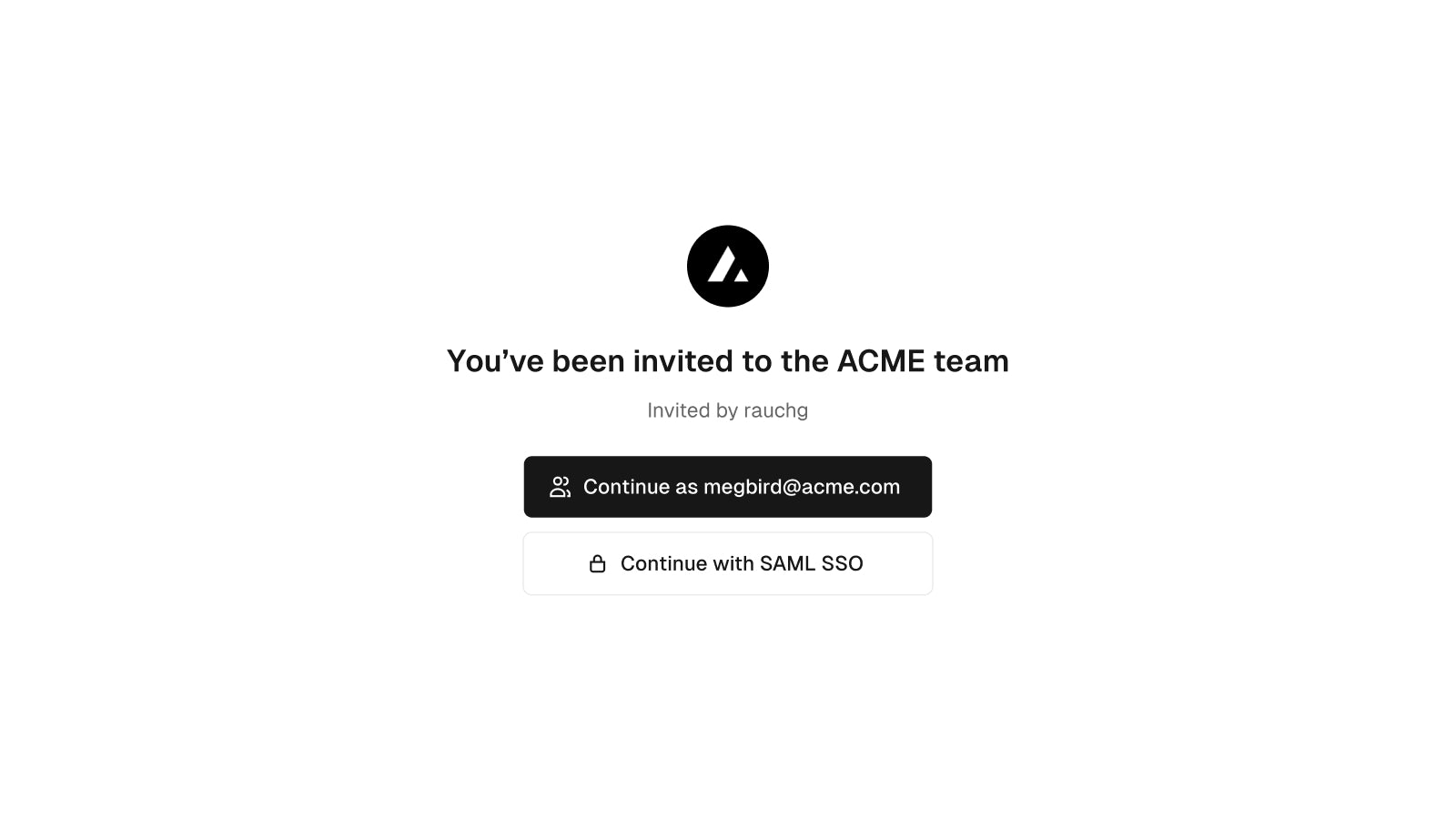

Improved team onboarding experience

It’s now easier to join a team on Vercel. New team members no longer need to re-enter their email or create a Hobby team or Pro trial. Team invite emails now lead to a sign-up page customized for the team. This includes simplified sign-up options that reflect the team's SSO settings.

You can invite new team members under "Members" in your team settings. Learn more about managing team members in the documentation.

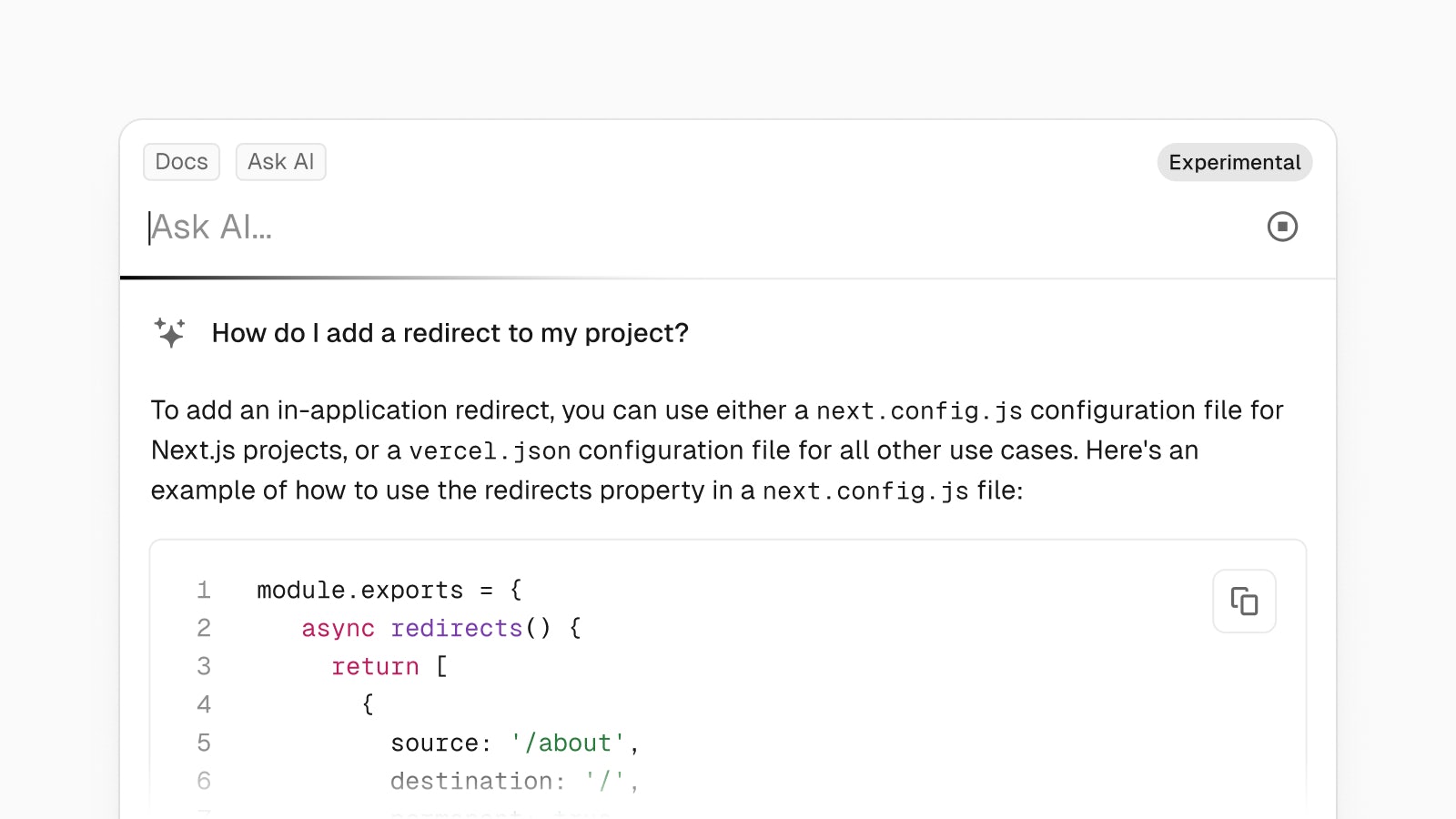

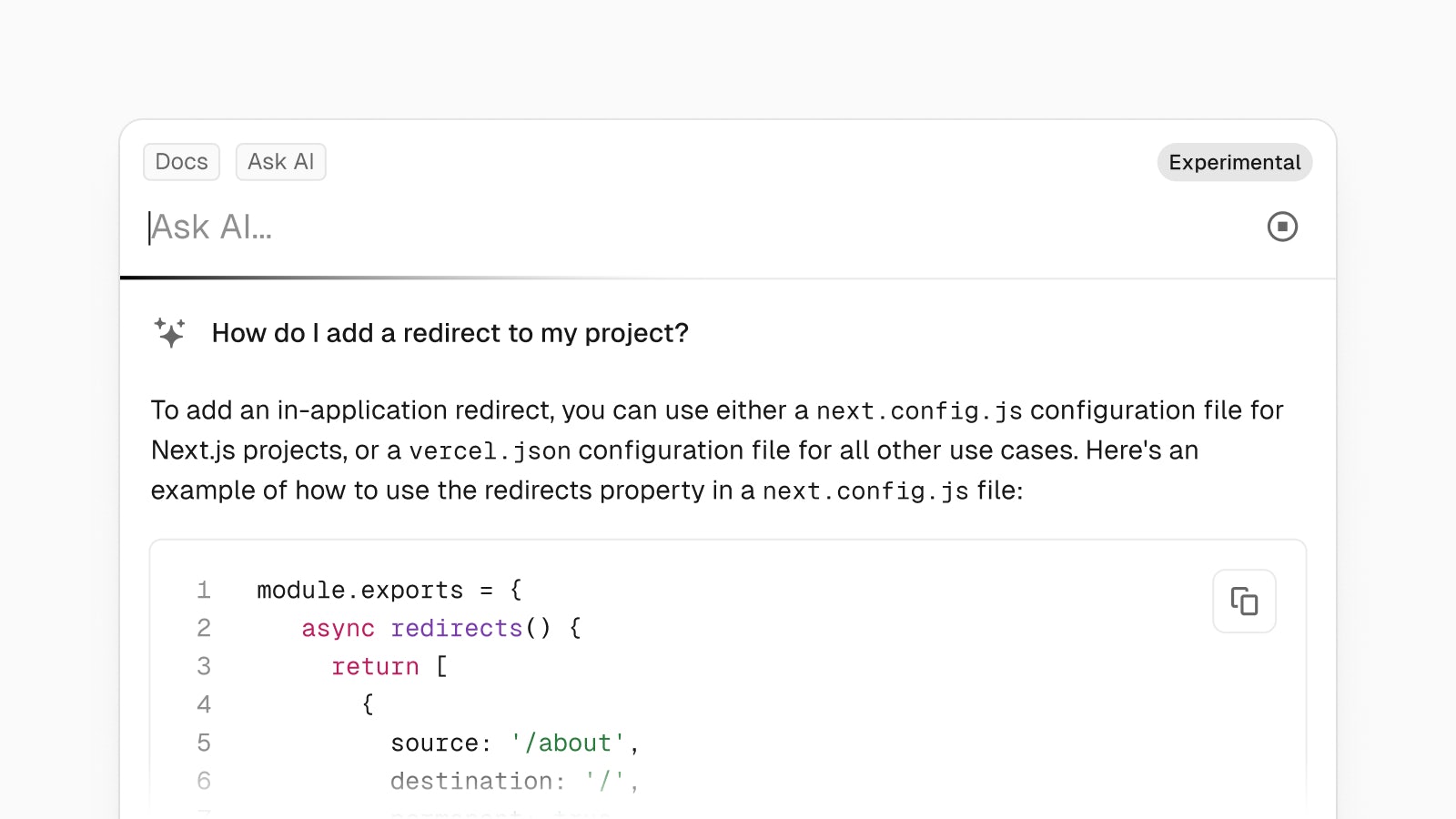

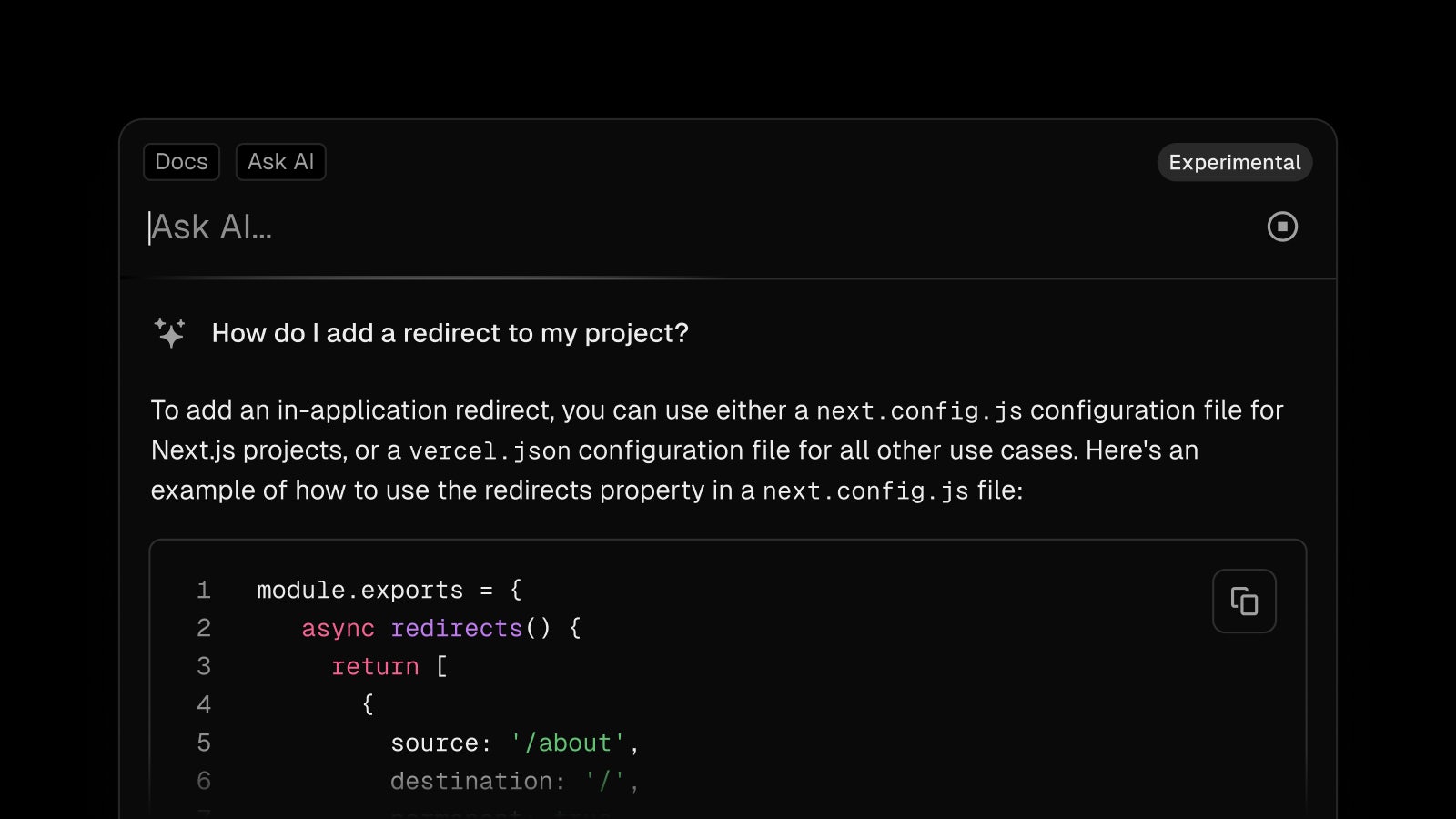

AI-enhanced search for Vercel documentation

You can now get AI-assisted answers to your questions directly from the Vercel docs search:

- Use natural language to ask questions about the docs

- View recent search queries and continue conversations

- Easily copy code and markdown output

- Leave feedback to help us improve the quality of responses

Start searching from the ⌘K (or Ctrl+K on Windows) menu on vercel.com/docs.

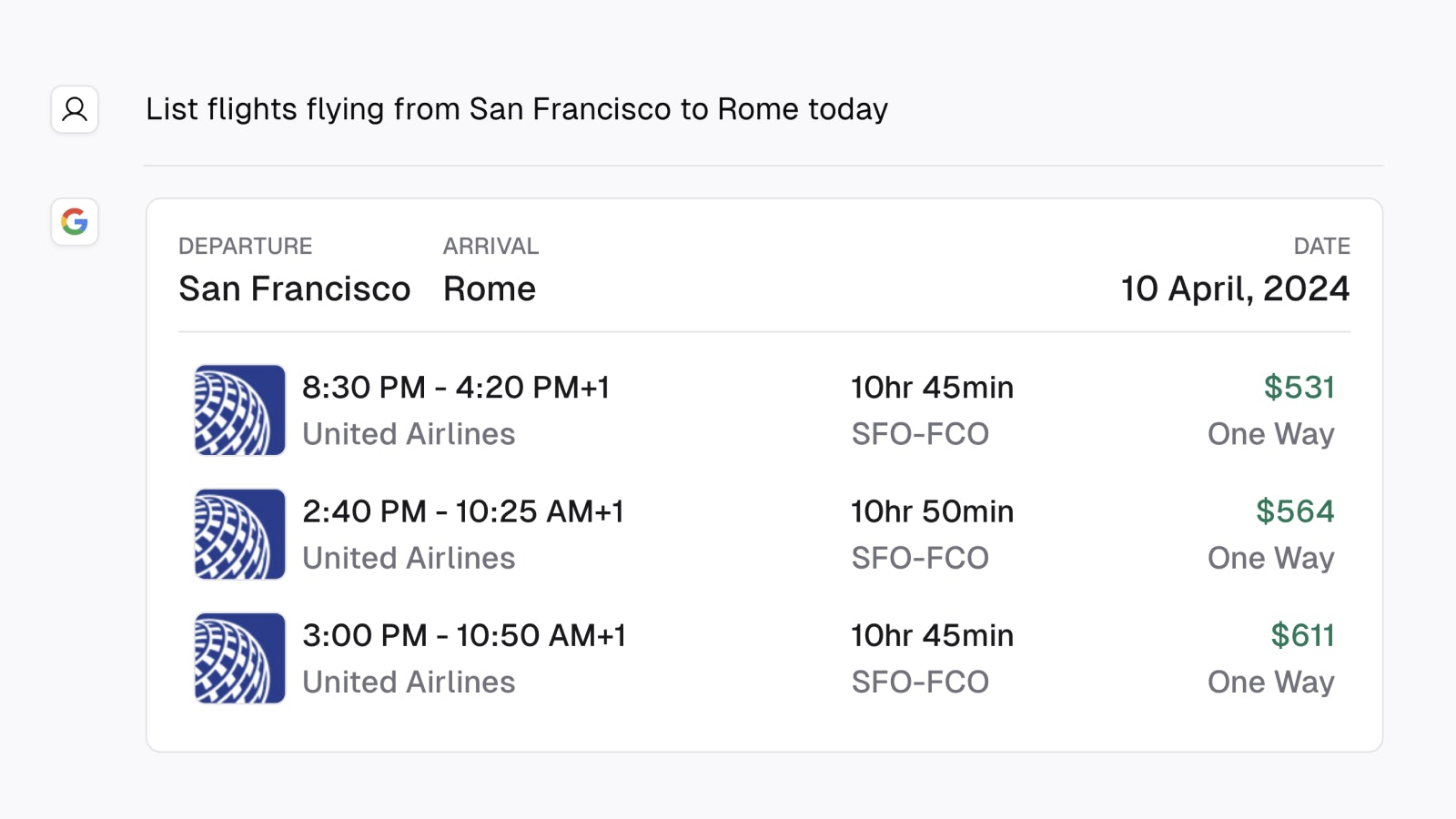

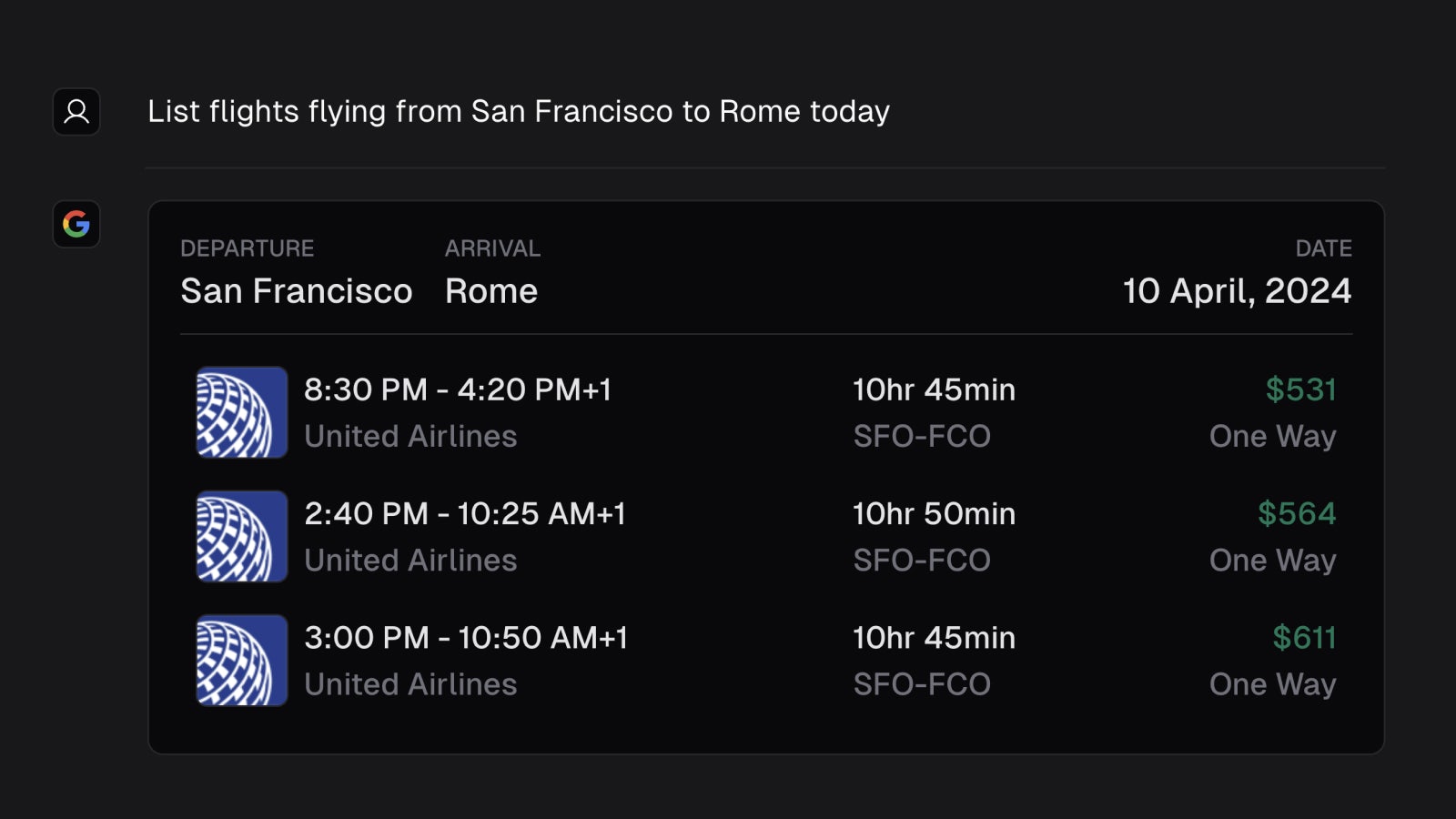

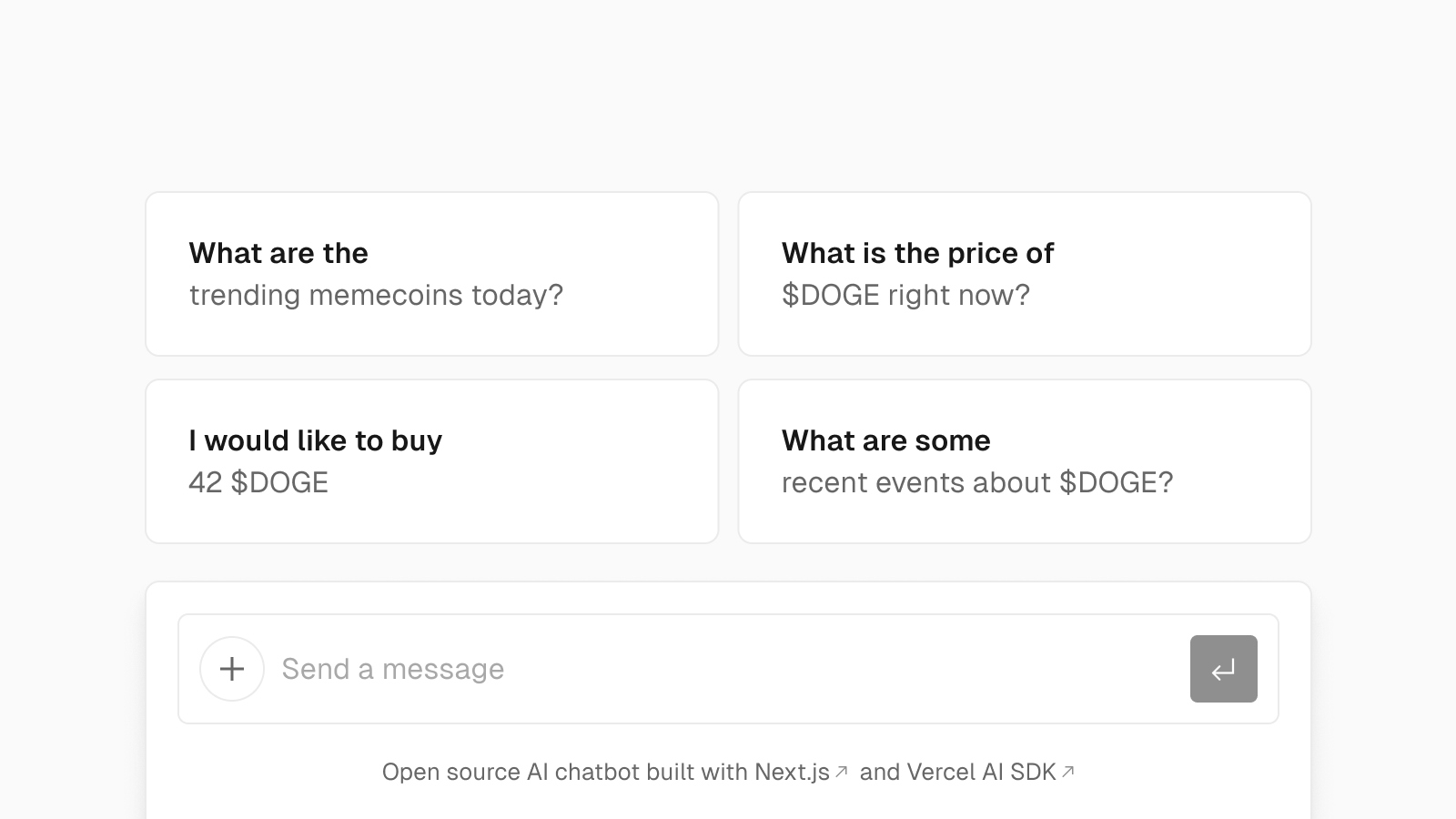

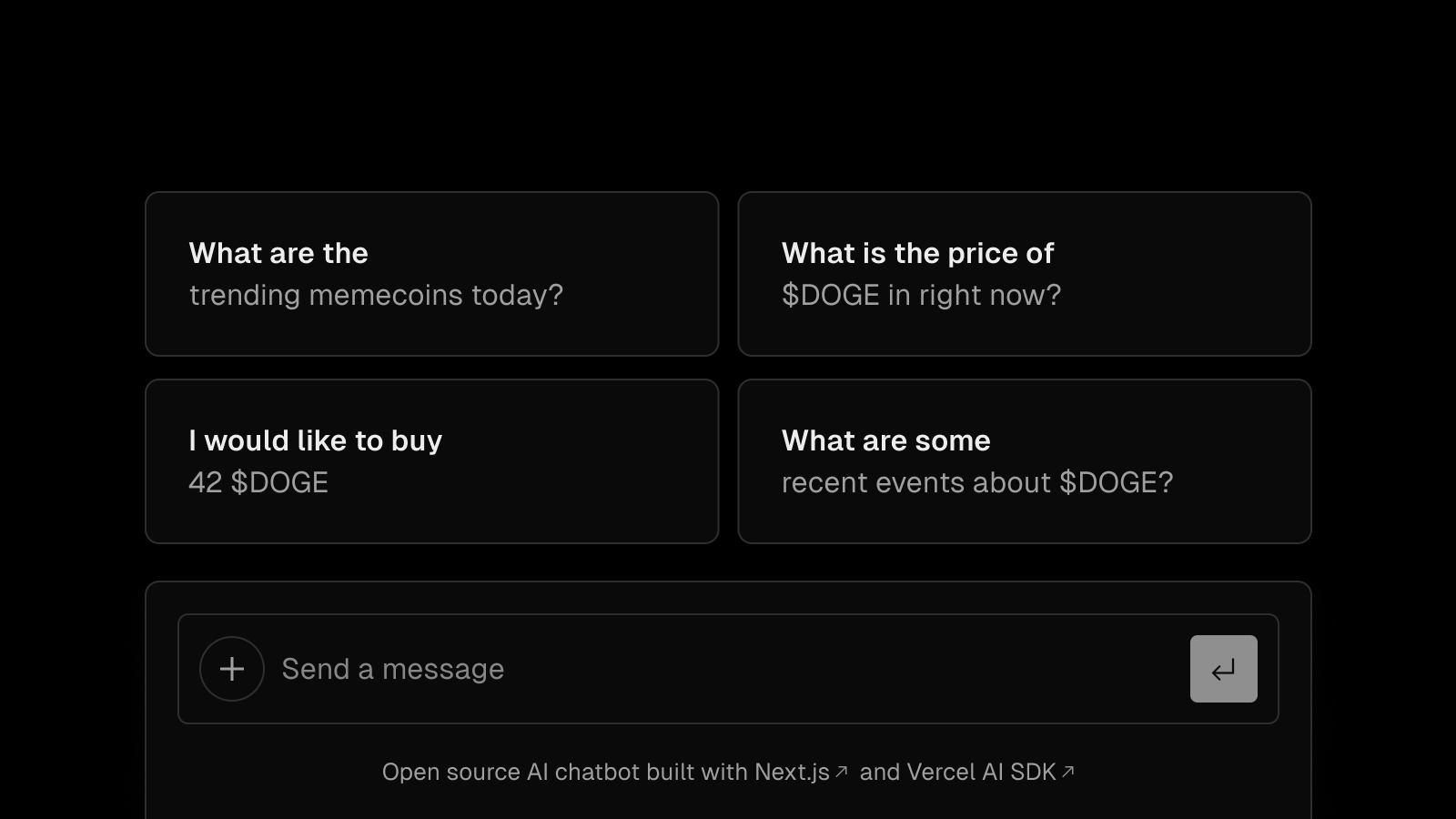

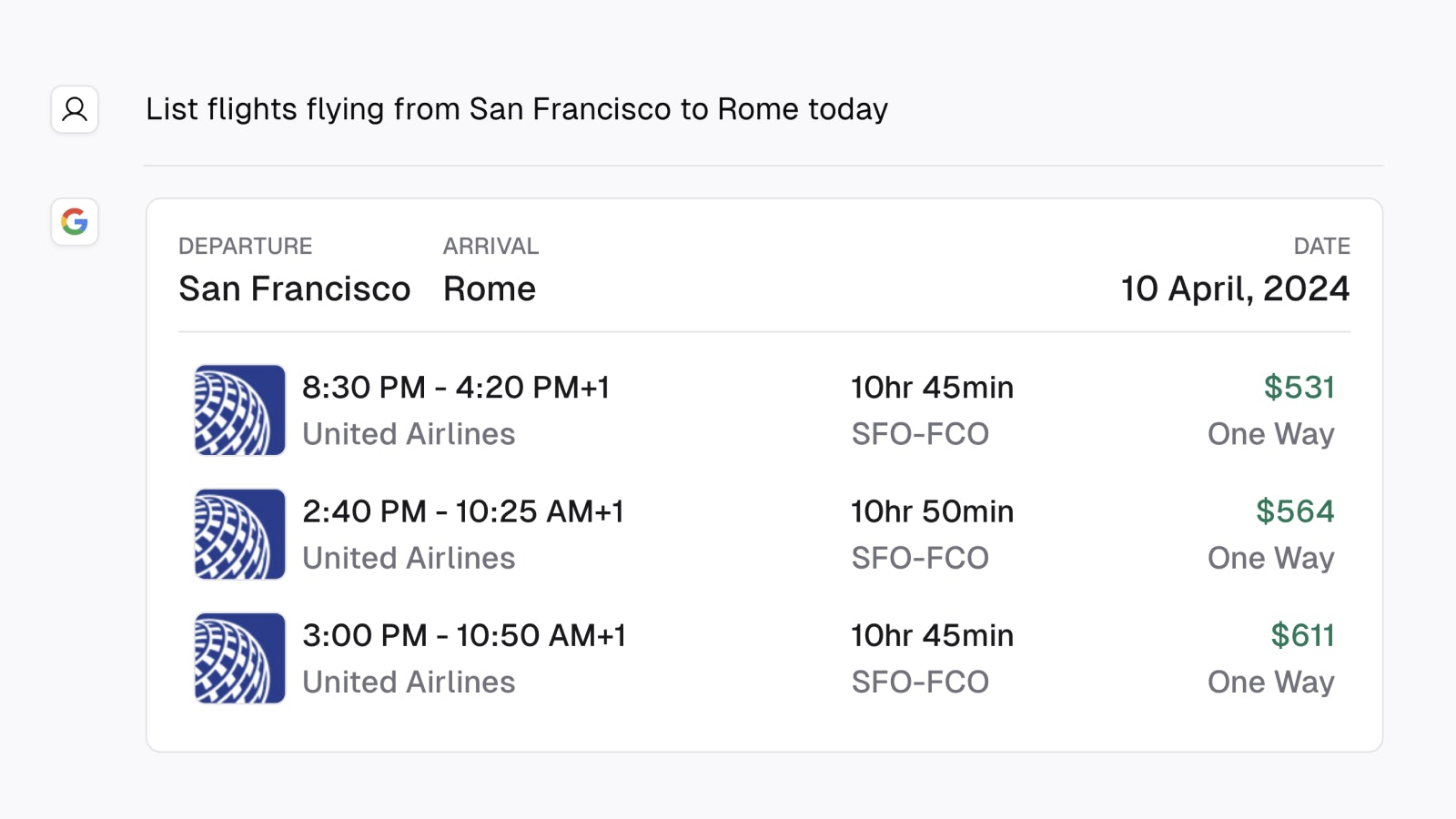

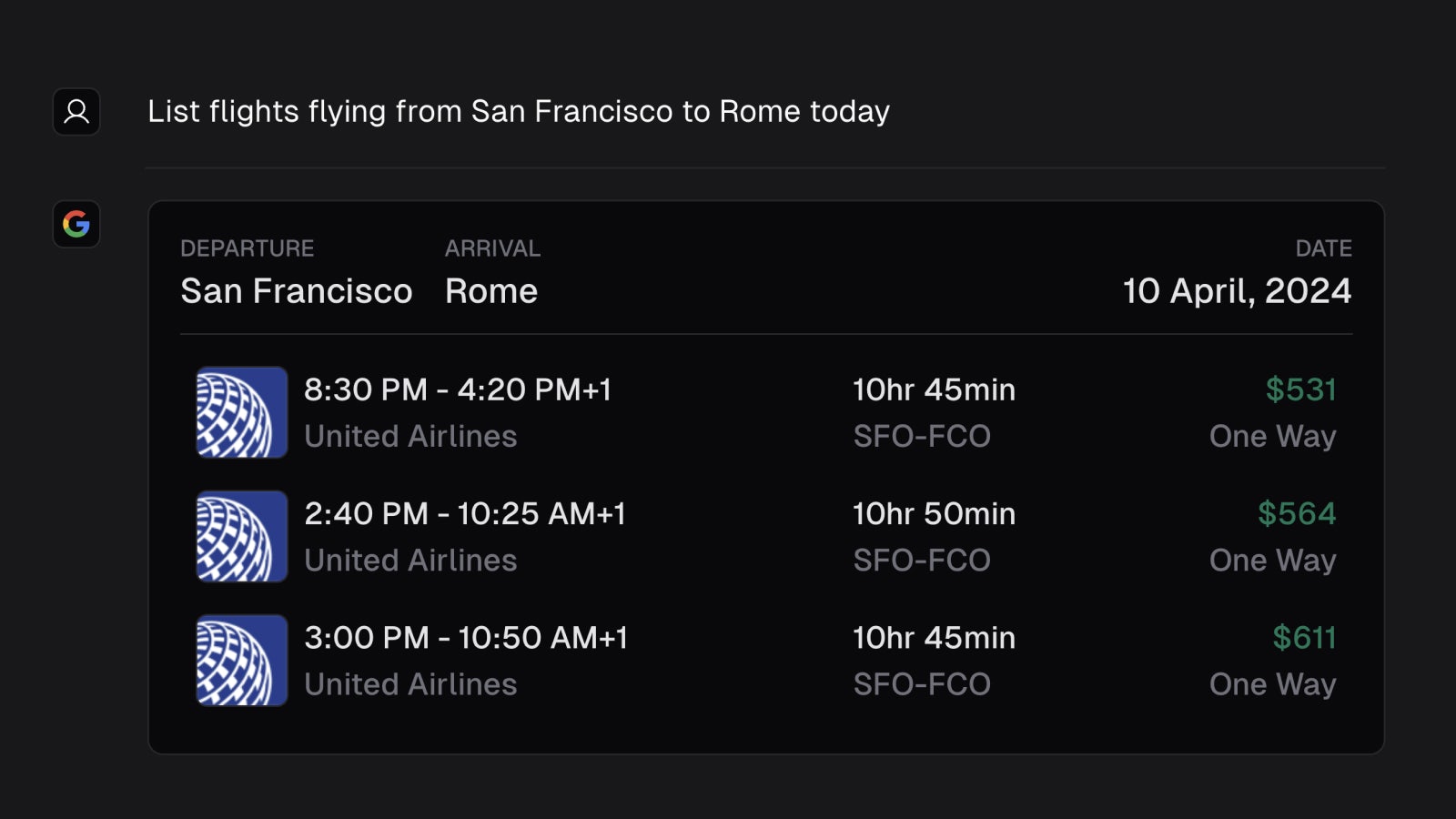

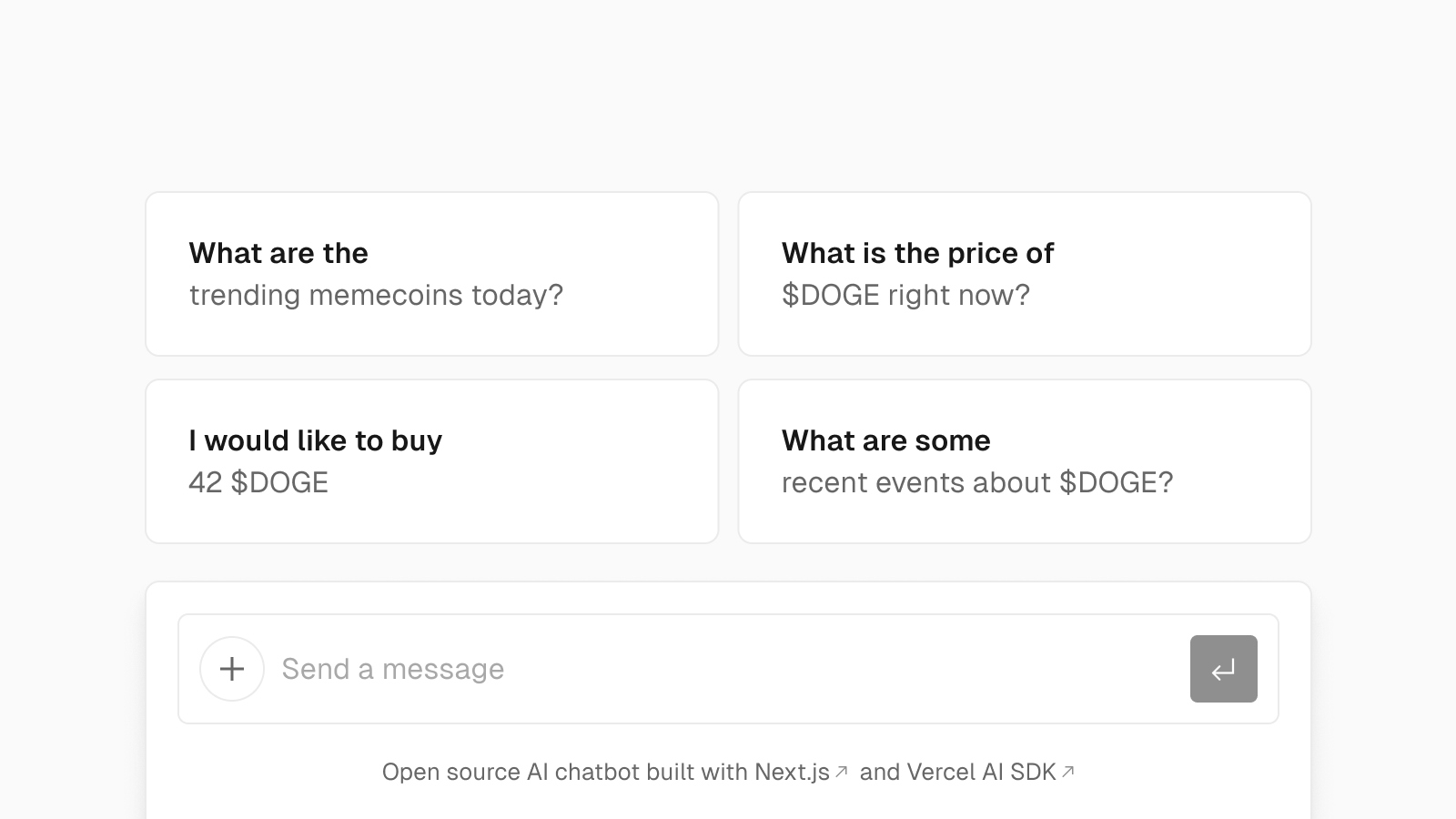

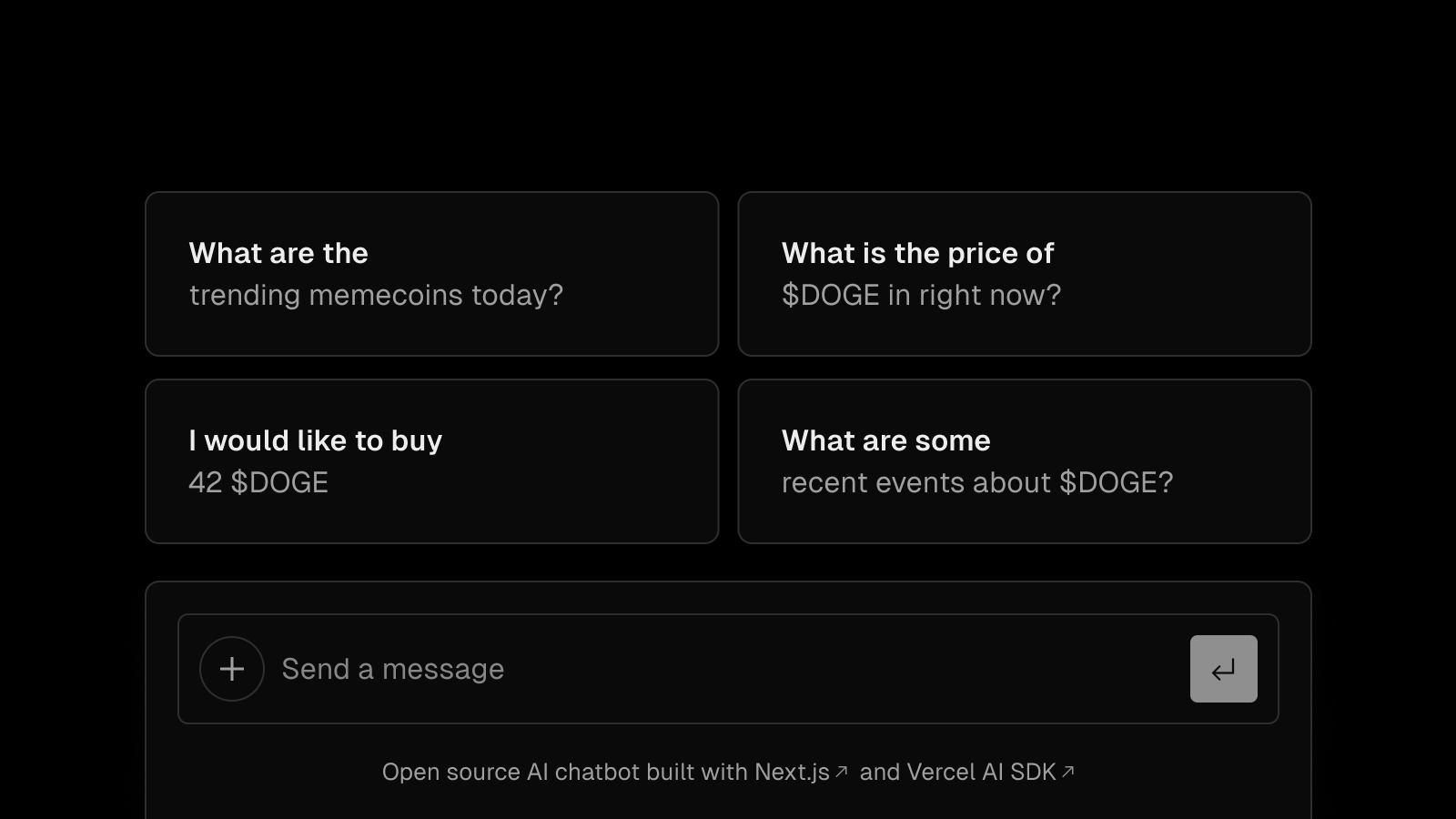

Gemini AI Chatbot with Generative UI support

The Gemini AI Chatbot template is a streaming-enabled, Generative UI starter application. It's built with the Vercel AI SDK, Next.js App Router, and React Server Components & Server Actions.

This template features persistent chat history, rate limiting to prevent abuse, session storage, user authentication, and more.

The Gemini model used is models/gemini-1.0-pro-001. However, the Vercel AI SDK lets you explore other LLM providers (like OpenAI, Anthropic, Cohere, Hugging Face, or using LangChain) with just a few lines of code.

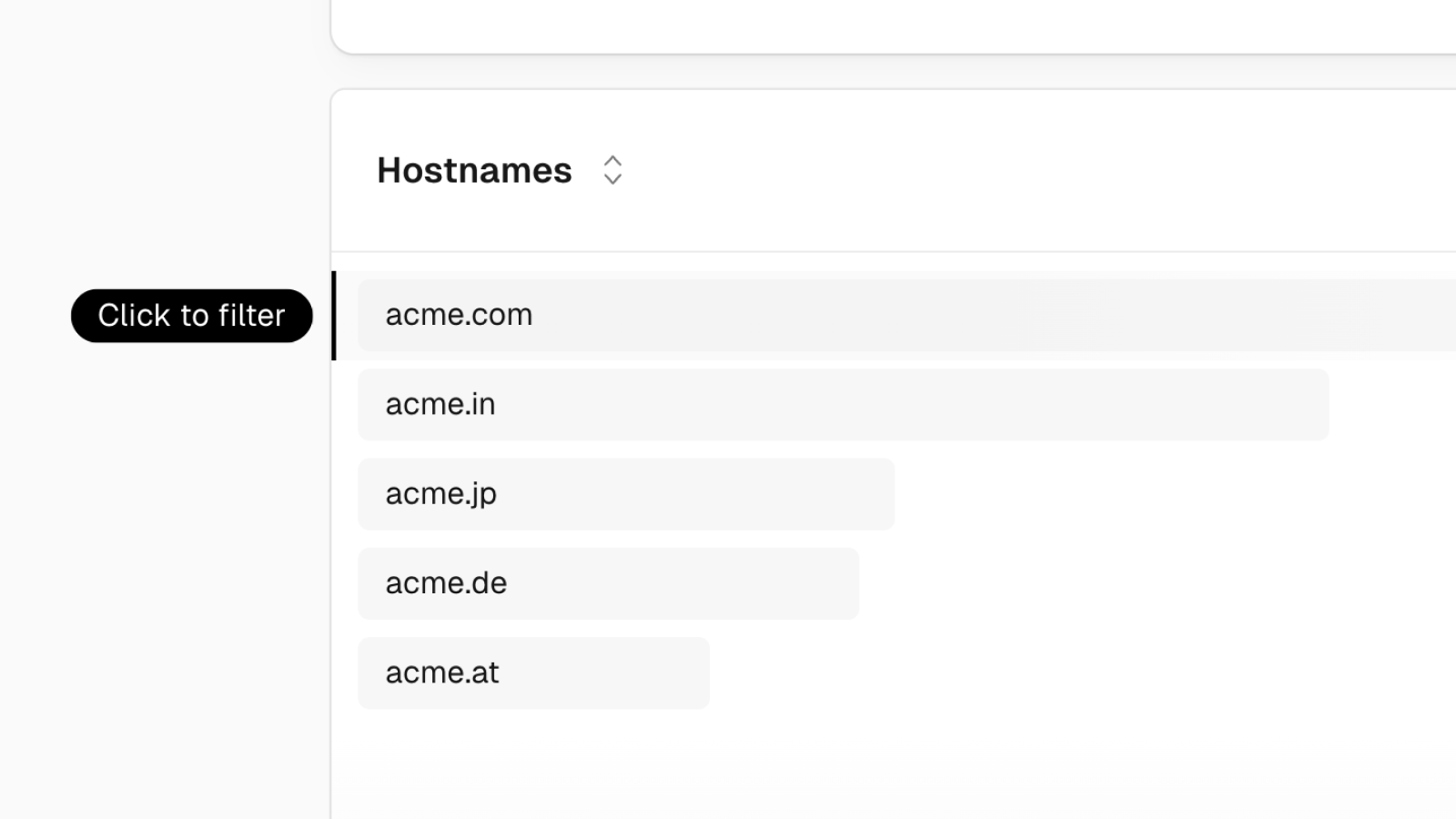

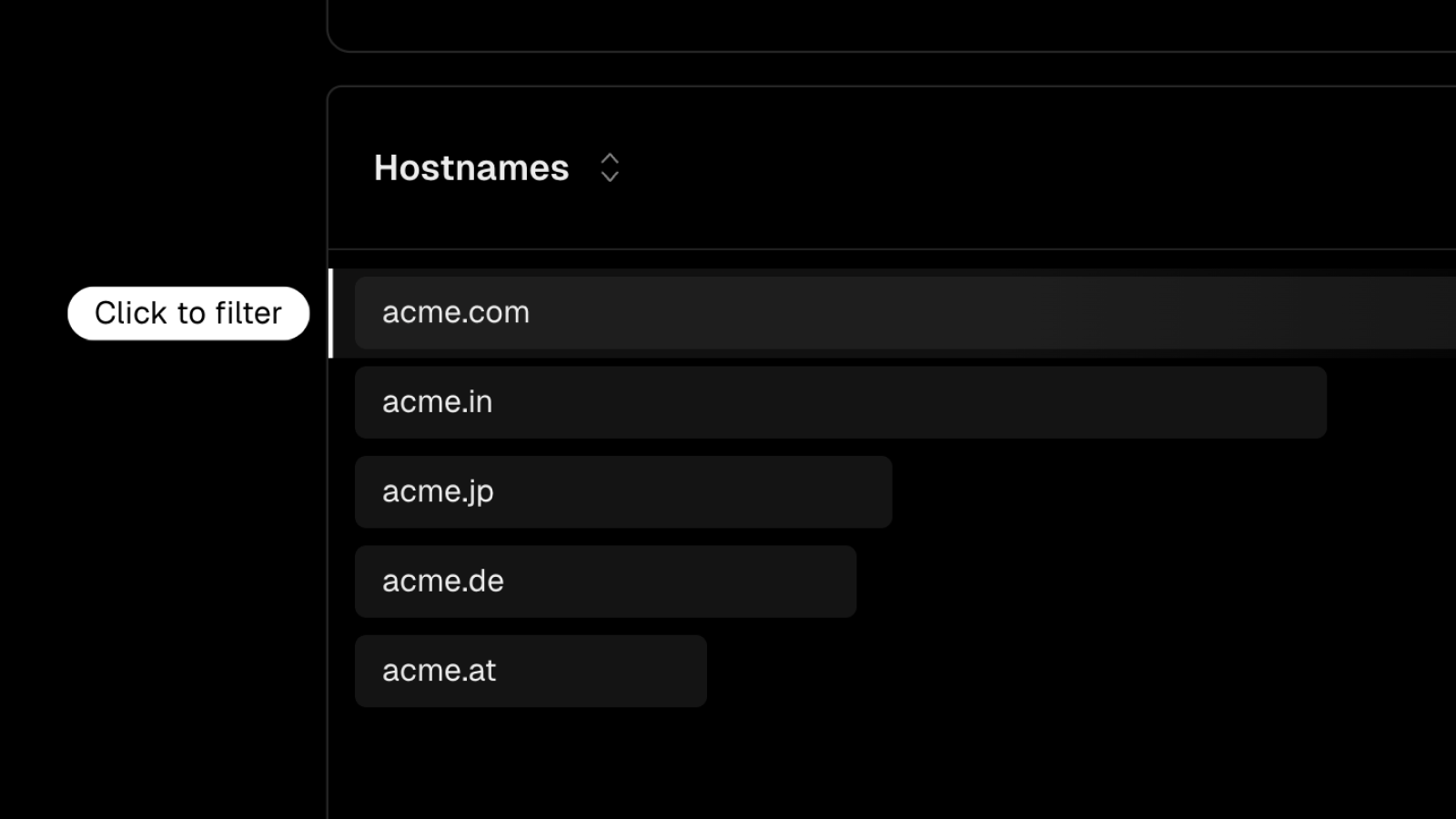

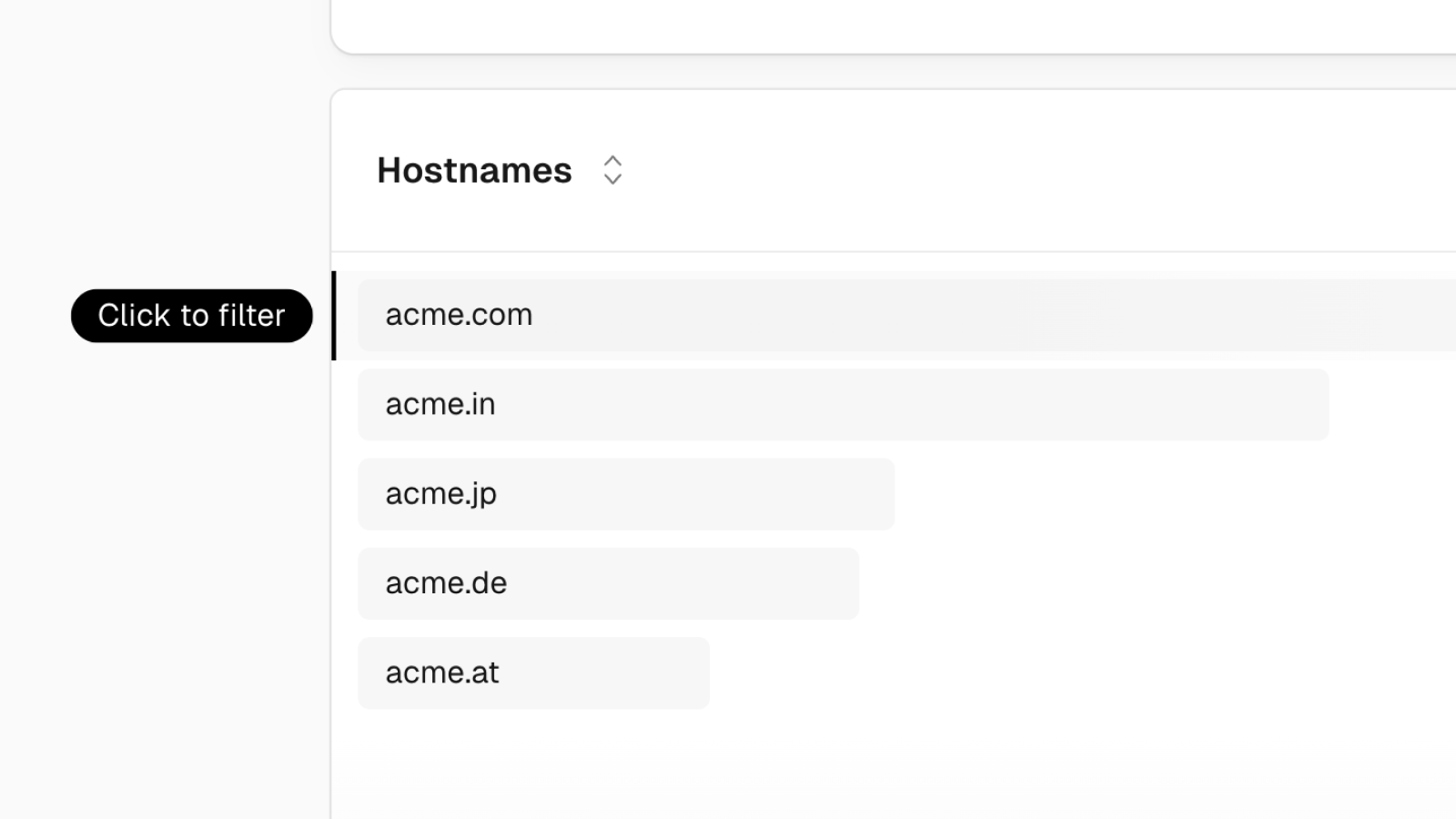

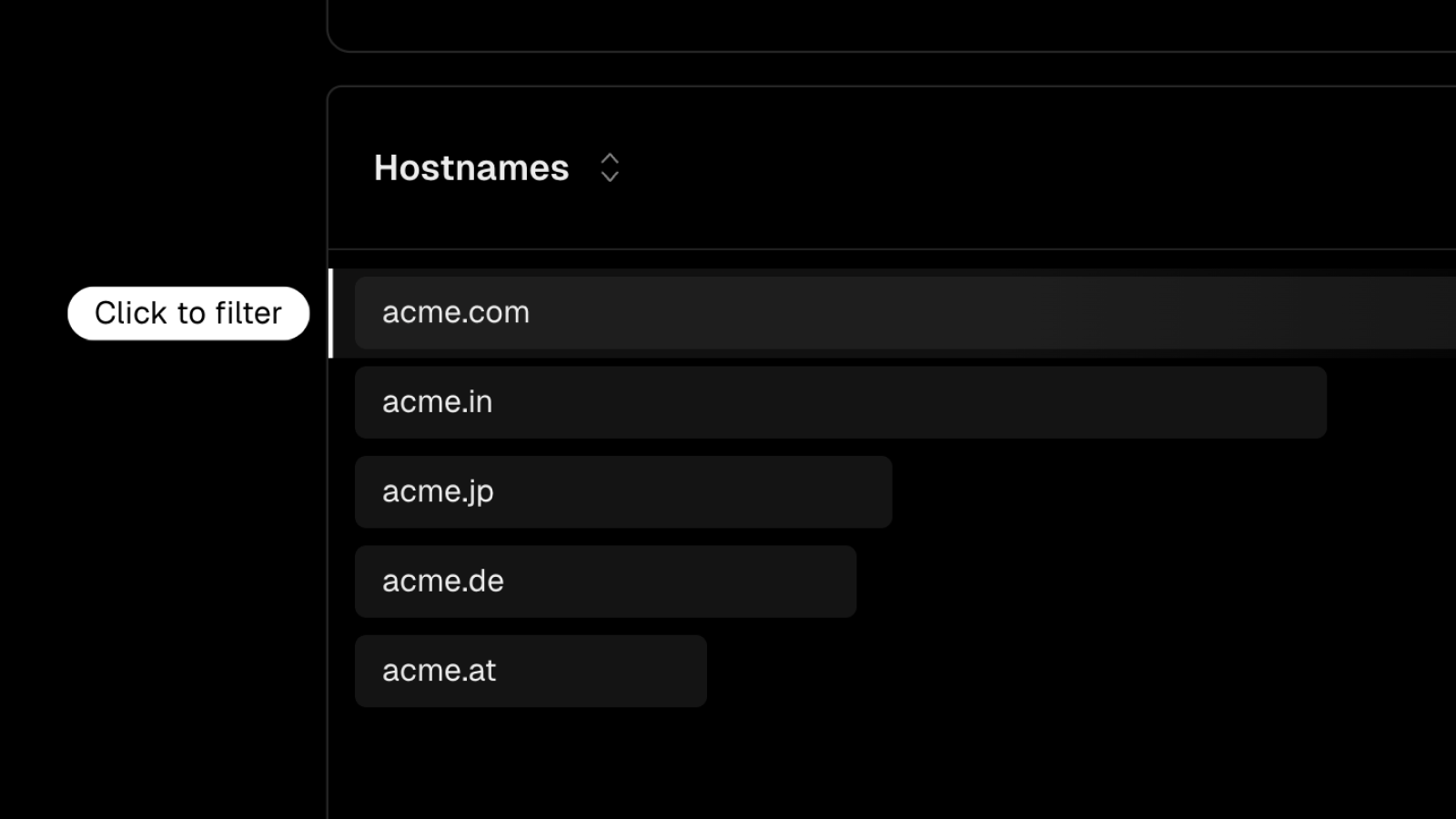

Hostname support in Web Analytics

You can now inspect and filter hostnames in Vercel Web Analytics.

- Domain insights: Analyze traffic by specific domains. This is beneficial for per-country domains, or for building multi-tenant applications.

- Advanced filtering: Apply filters based on hostnames to view page views and custom events per domain.

This feature is available to all Web Analytics customers.

Learn more in our documentation about filtering.

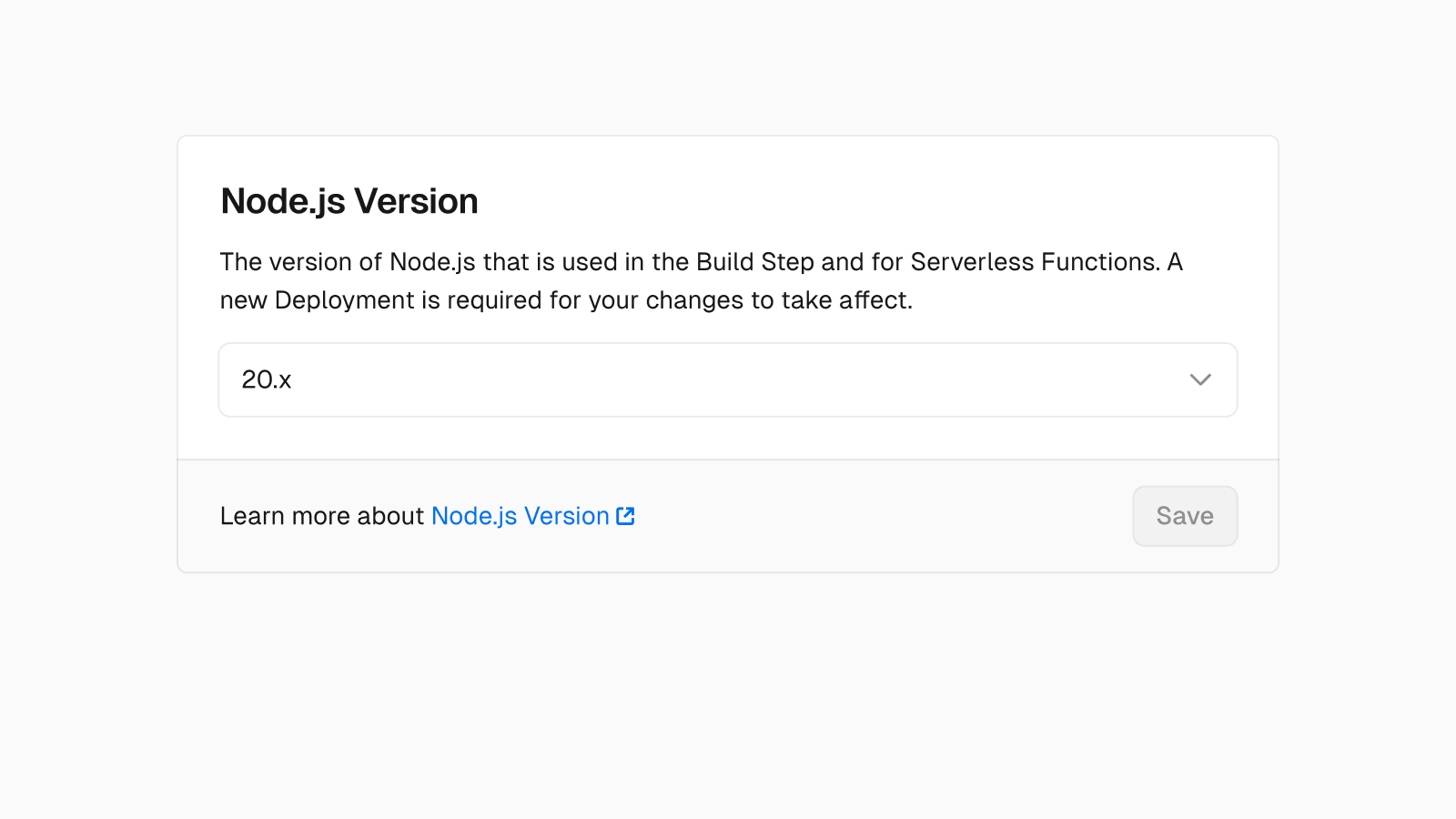

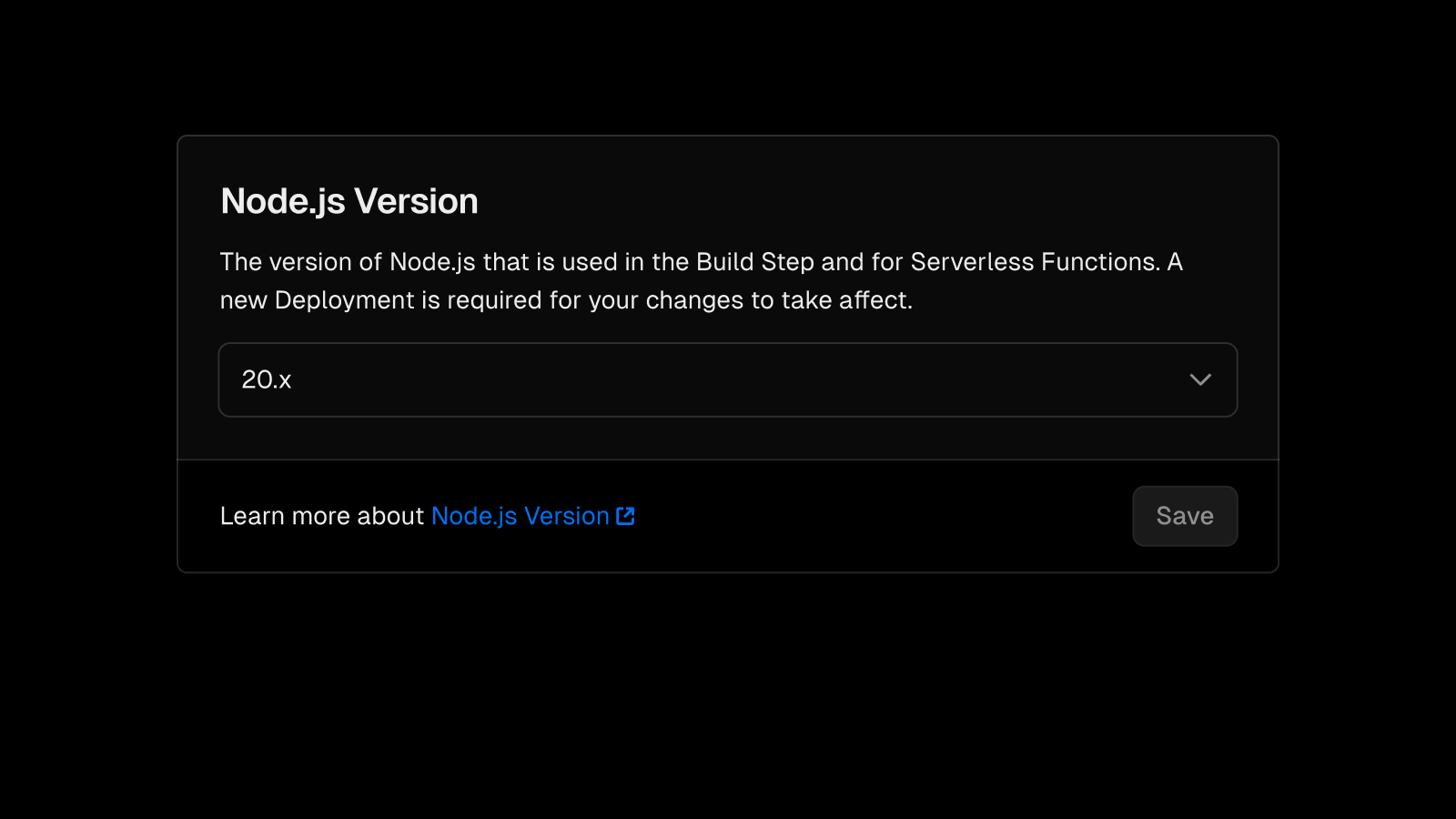

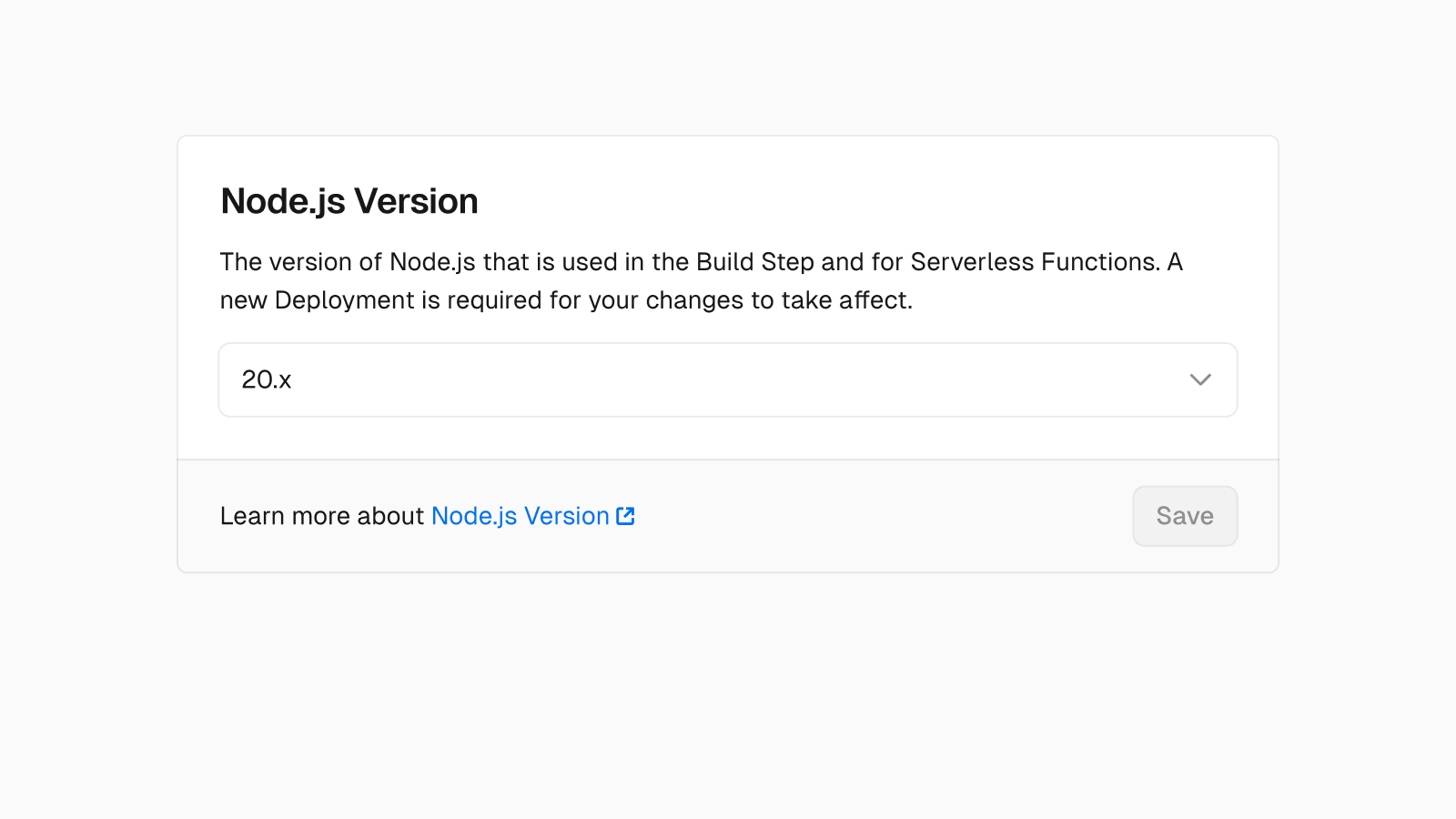

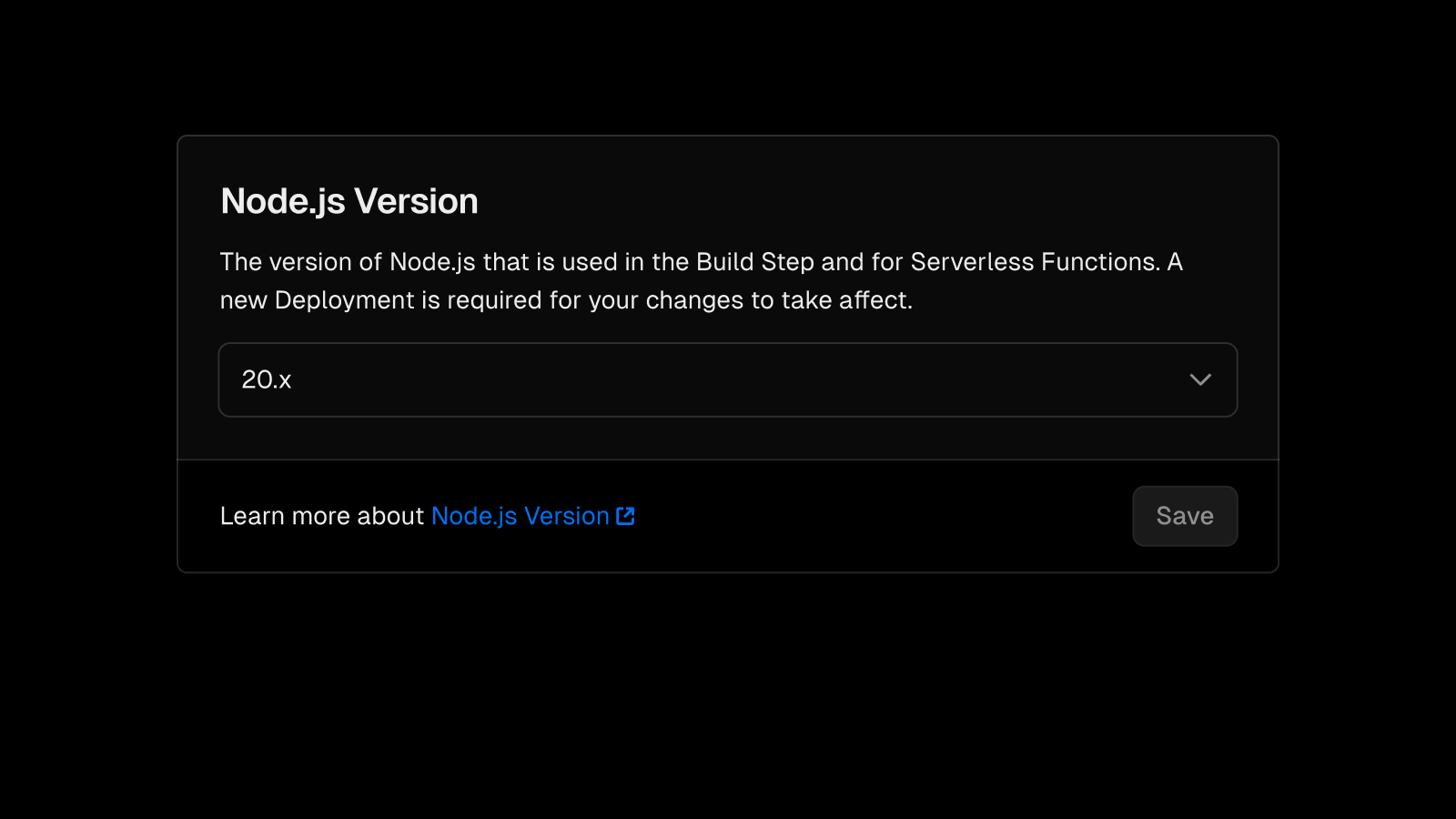

Node.js v20 LTS is now generally available

Node.js 20 is now fully supported for Builds and Vercel Functions. You can select 20.x in the Node.js Version section on the General page in the Project Settings. The default version for new projects is now Node.js 20.

Node.js 20 offers improved performance and introduces new core APIs to reduce the dependency on third-party libraries in your project.

The exact version currently used by Vercel is 20.11.1, and it will automatically update to new minor and patch releases. Therefore, only the major version (20.x) is guaranteed.
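Because only the major version is guaranteed, any runtime check in your own code should compare the major version rather than an exact string. A minimal sketch, with hypothetical helper names:

```typescript
// Extracts the major version from a Node.js version string such as
// "20.11.1" or "v20.11.1". Throws if the string cannot be parsed.
function majorOf(version: string): number {
  const [first = ""] = version.replace(/^v/, "").split(".");
  const major = Number.parseInt(first, 10);
  if (Number.isNaN(major)) {
    throw new Error(`Unrecognized Node.js version: ${version}`);
  }
  return major;
}

// True when the given version belongs to the expected major line,
// regardless of its minor and patch components.
function isExpectedMajor(version: string, expectedMajor: number): boolean {
  return majorOf(version) === expectedMajor;
}
```

In a function, you could call `isExpectedMajor(process.versions.node, 20)`. This keeps passing as the minor and patch versions move forward, whereas a comparison against `"20.11.1"` would not.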

Read the documentation for more.

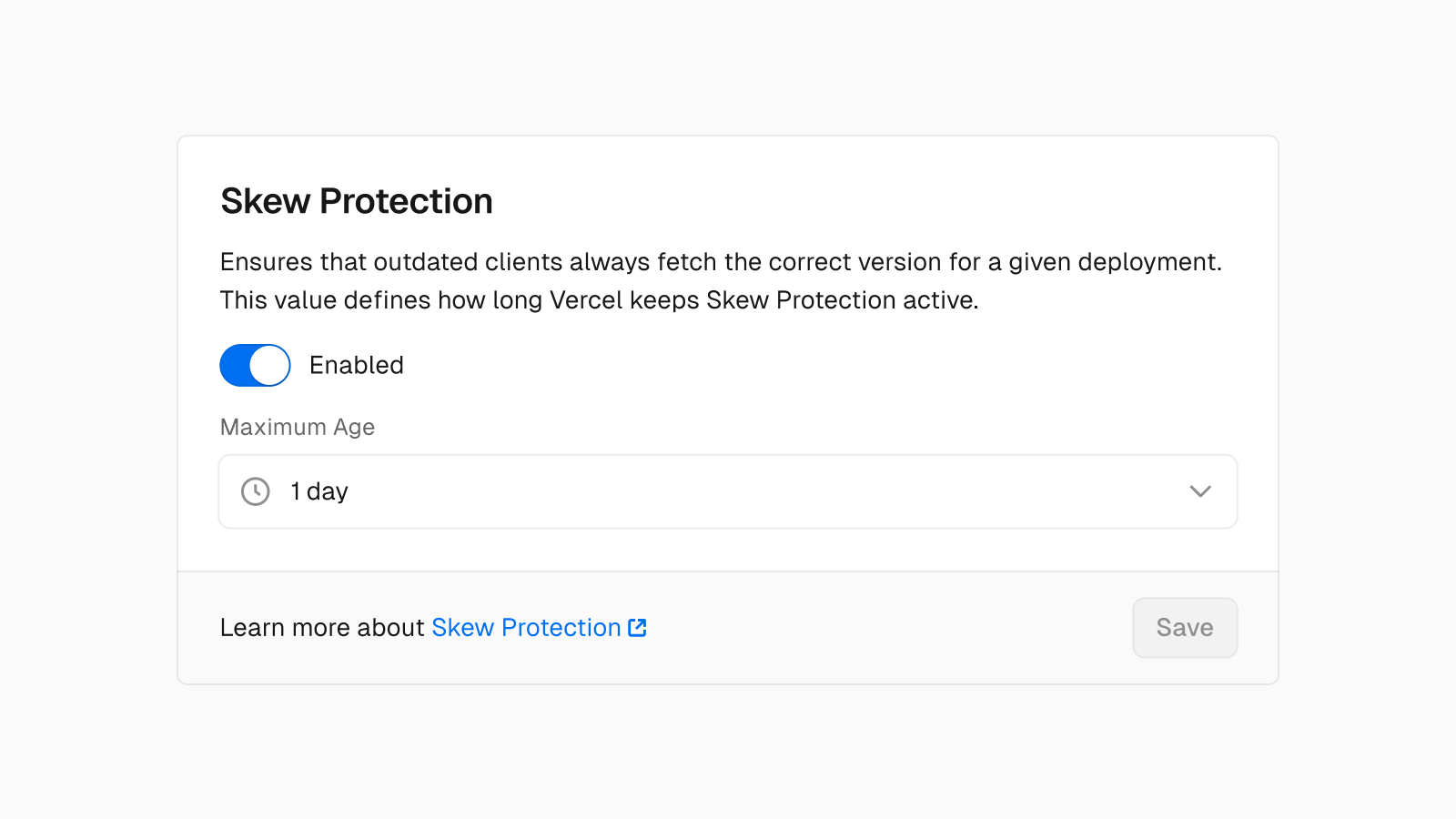

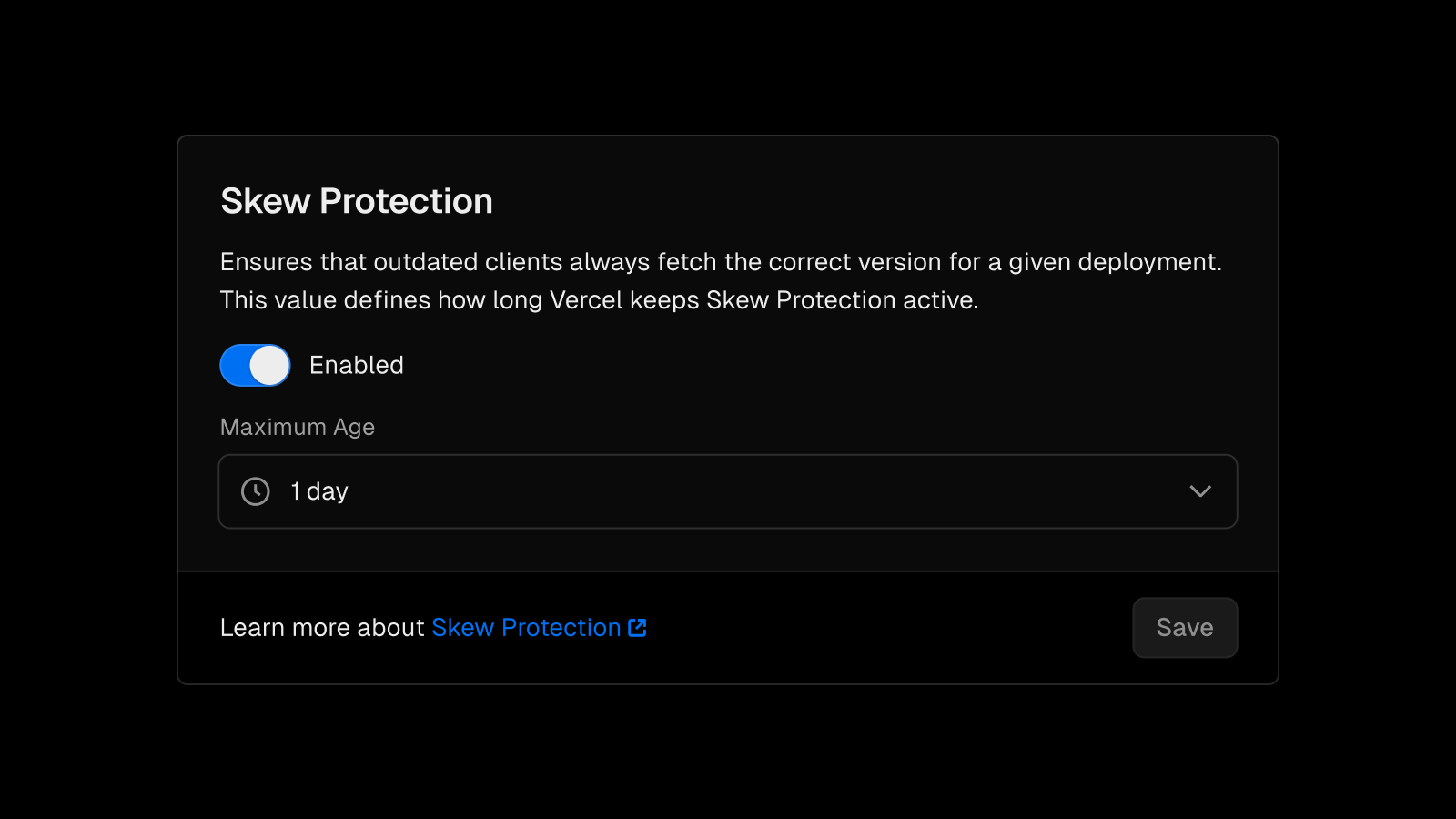

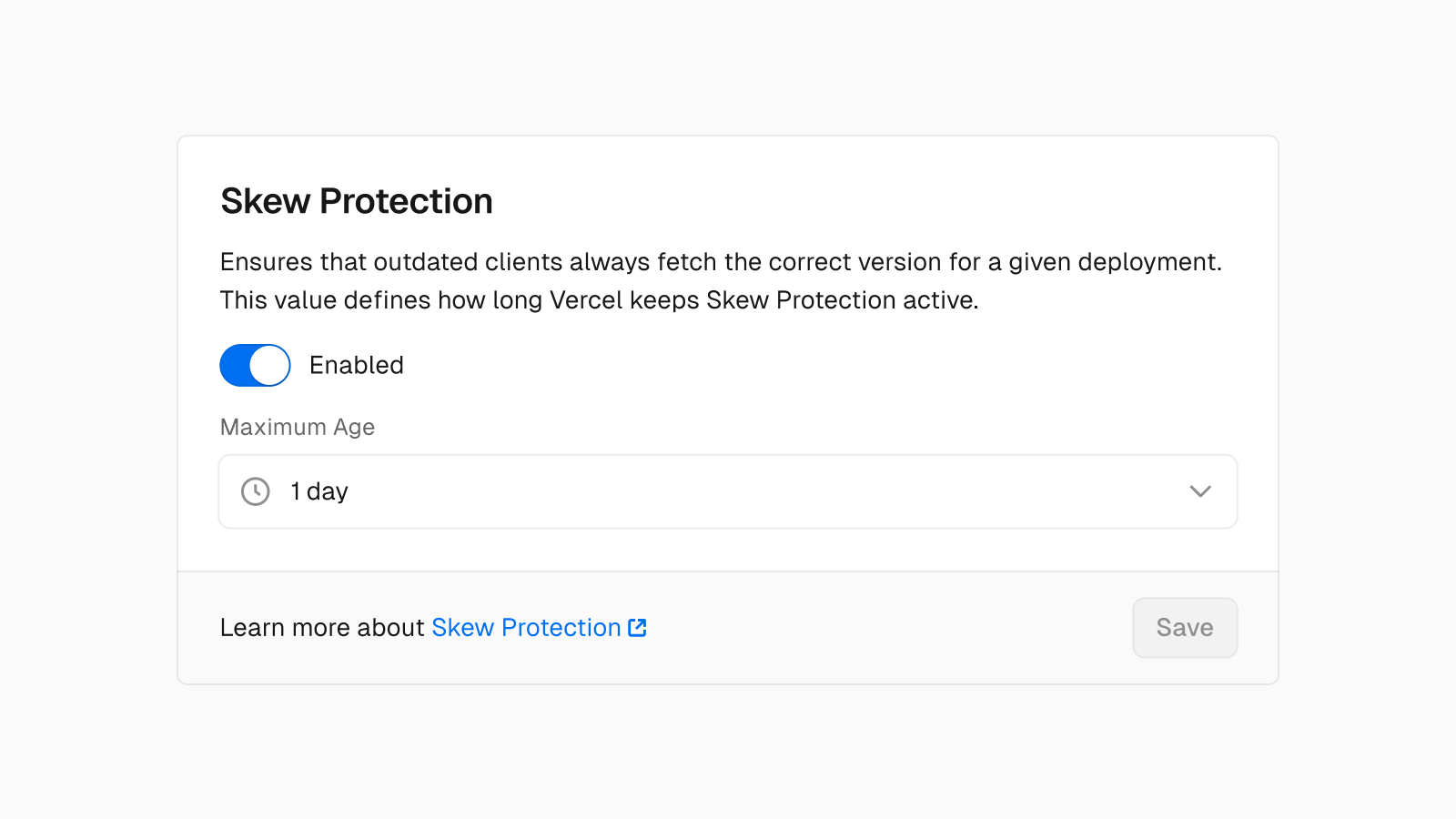

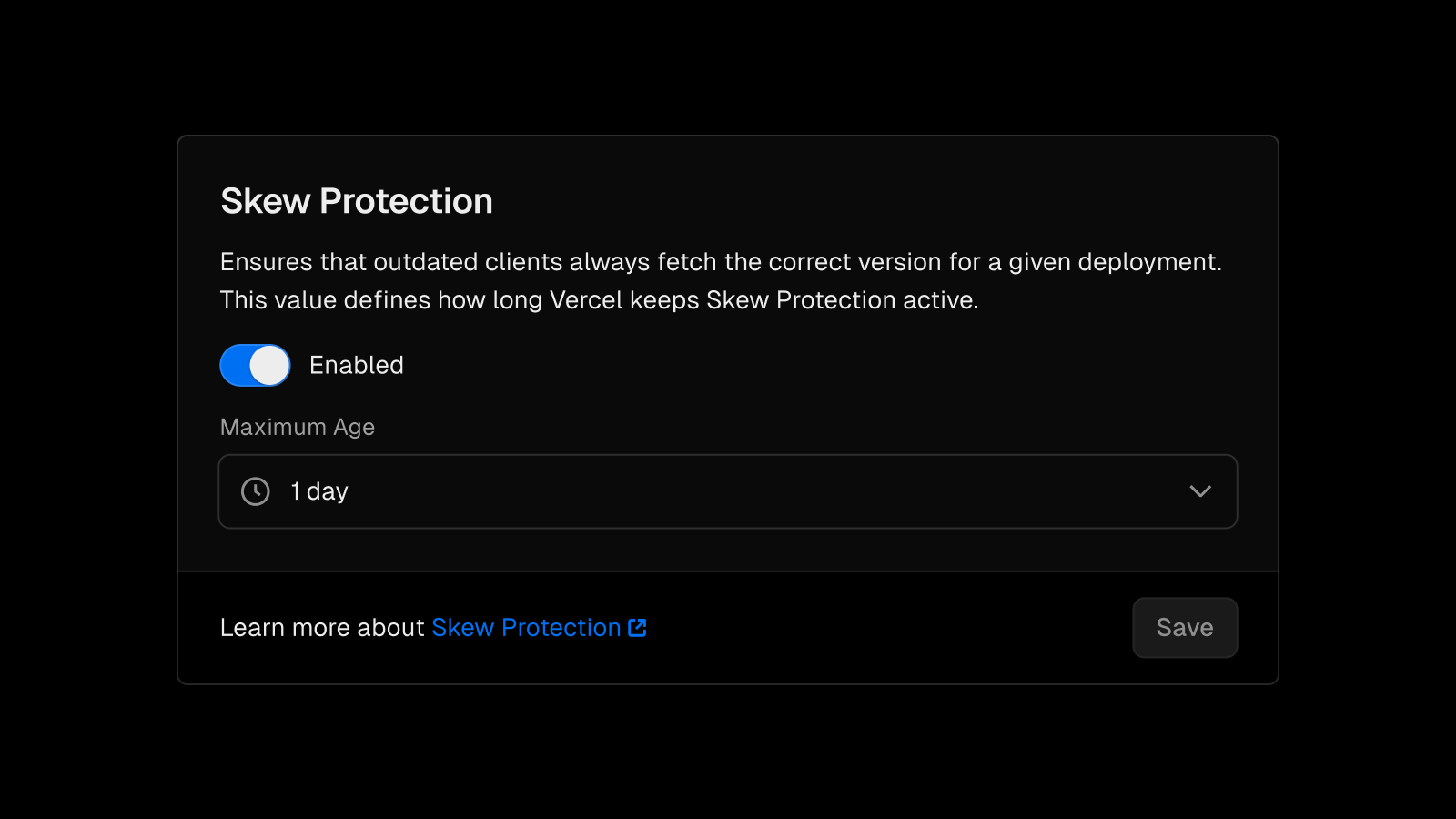

Skew Protection is now generally available

Last year, we introduced Vercel's industry-first Skew Protection mechanism and we're happy to announce it is now generally available.

Skew Protection solves two problems with frontend applications:

- If users try to request assets (like CSS or JavaScript files) in the middle of a deployment, Skew Protection enables truly zero-downtime rollouts and ensures those requests resolve successfully.

- Outdated clients are able to call the correct API endpoints (or React Server Actions) when new server code is published from the latest deployment.

Since the initial release of Skew Protection, we have made the following improvements:

- Skew Protection can now be managed through the UI in the advanced Project Settings

- Pro customers now default to 12 hours of protection

- Enterprise customers can get up to 7 days of protection
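The idea behind the two cases and the protection window above can be sketched conceptually. This is not Vercel's implementation: the types, the `resolveDeployment` function, and the choice to measure the window from a deployment's creation time are all assumptions made for illustration.

```typescript
// Hypothetical model of a deployment; not a Vercel API type.
interface Deployment {
  id: string;
  createdAtMs: number;
}

const HOUR_MS = 60 * 60 * 1000;

// Decides which deployment should serve a request.
// - pinnedId: the deployment the client was originally served from, if known.
// - maxAgeMs: the protection window (assumed to default to 12 hours and to be
//   measured from when the pinned deployment was created).
function resolveDeployment(
  pinnedId: string | undefined,
  deployments: Map<string, Deployment>,
  latest: Deployment,
  nowMs: number,
  maxAgeMs: number = 12 * HOUR_MS,
): Deployment {
  if (pinnedId === undefined) return latest; // new client: use latest
  const pinned = deployments.get(pinnedId);
  if (pinned === undefined) return latest; // unknown deployment: use latest
  const ageMs = nowMs - pinned.createdAtMs;
  // Inside the window, keep client and server on the same version.
  return ageMs <= maxAgeMs ? pinned : latest;
}
```

In this sketch, a client loaded from an older deployment keeps receiving matching assets and API responses while that deployment is inside the window, and falls through to the latest deployment afterwards.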

Skew Protection is now supported in SvelteKit (v5.2.0 of the Vercel adapter), in addition to the existing support in Next.js (stable in v14.1.4), with more frameworks coming soon. Framework authors can view a reference implementation here.

Learn more in the documentation to get started with Skew Protection.

Next.js AI Chatbot 2.0

The Next.js AI Chatbot template has been updated to use AI SDK 3.0 with React Server Components.

We've included Generative UI examples so you can quickly create rich chat interfaces beyond just plain text. The chatbot has also been upgraded to the latest Next.js App Router and Shadcn UI.

Lastly, we've simplified the default authentication setup by removing the need to create a GitHub OAuth application prior to initial deployment. This will make it faster to deploy and also easier for open source contributors to use Vercel Preview Deployments when they make changes.

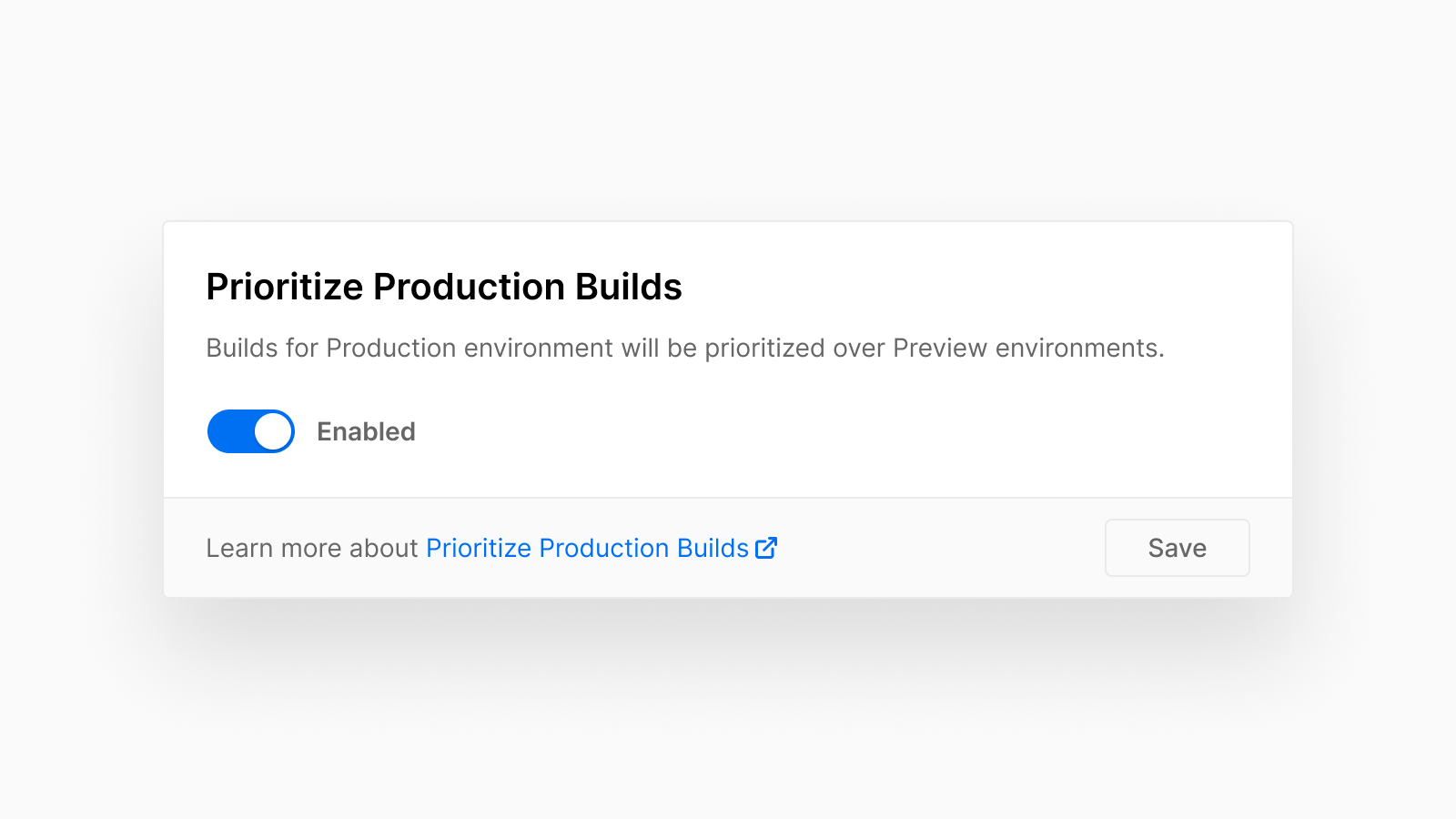

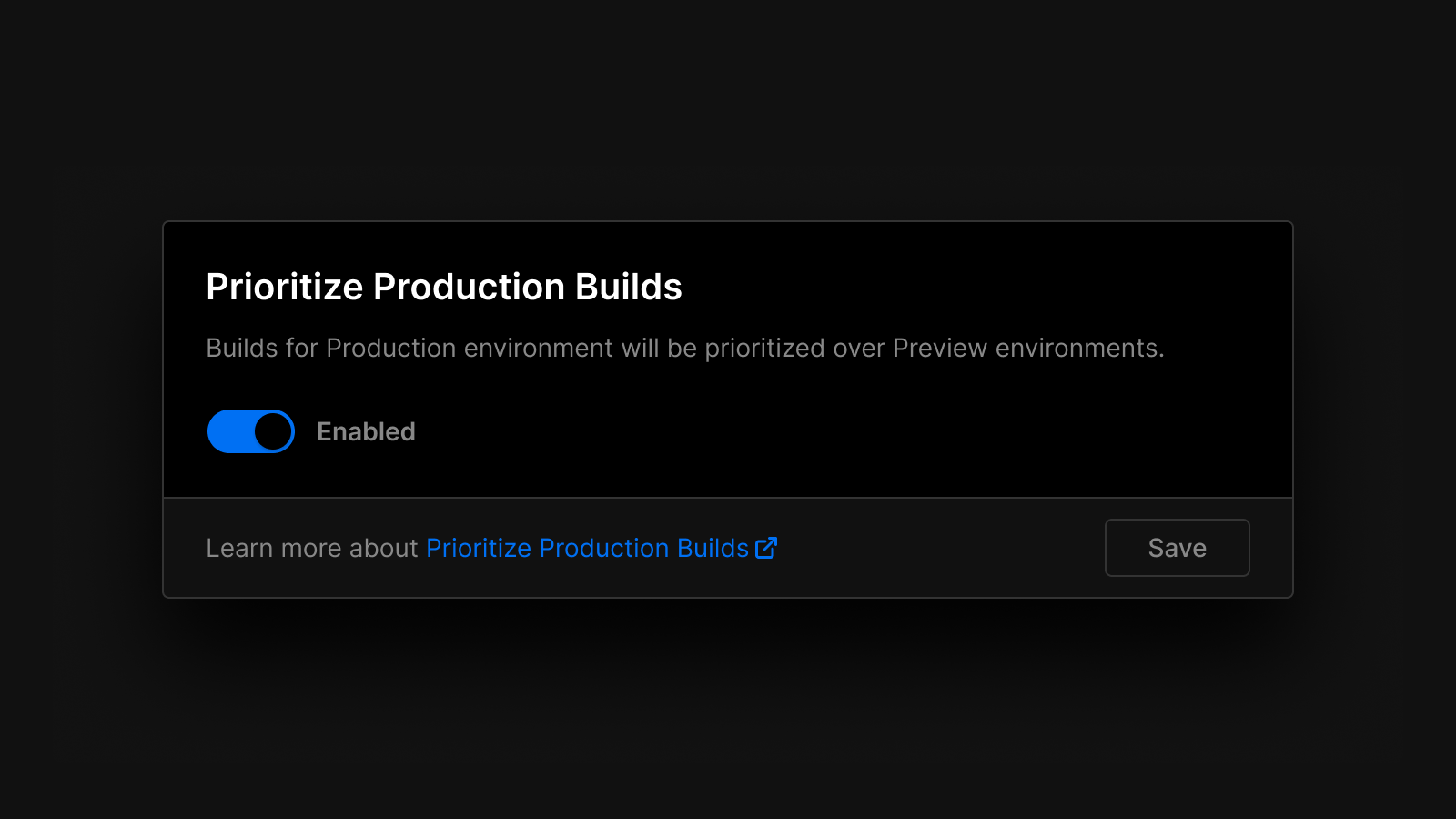

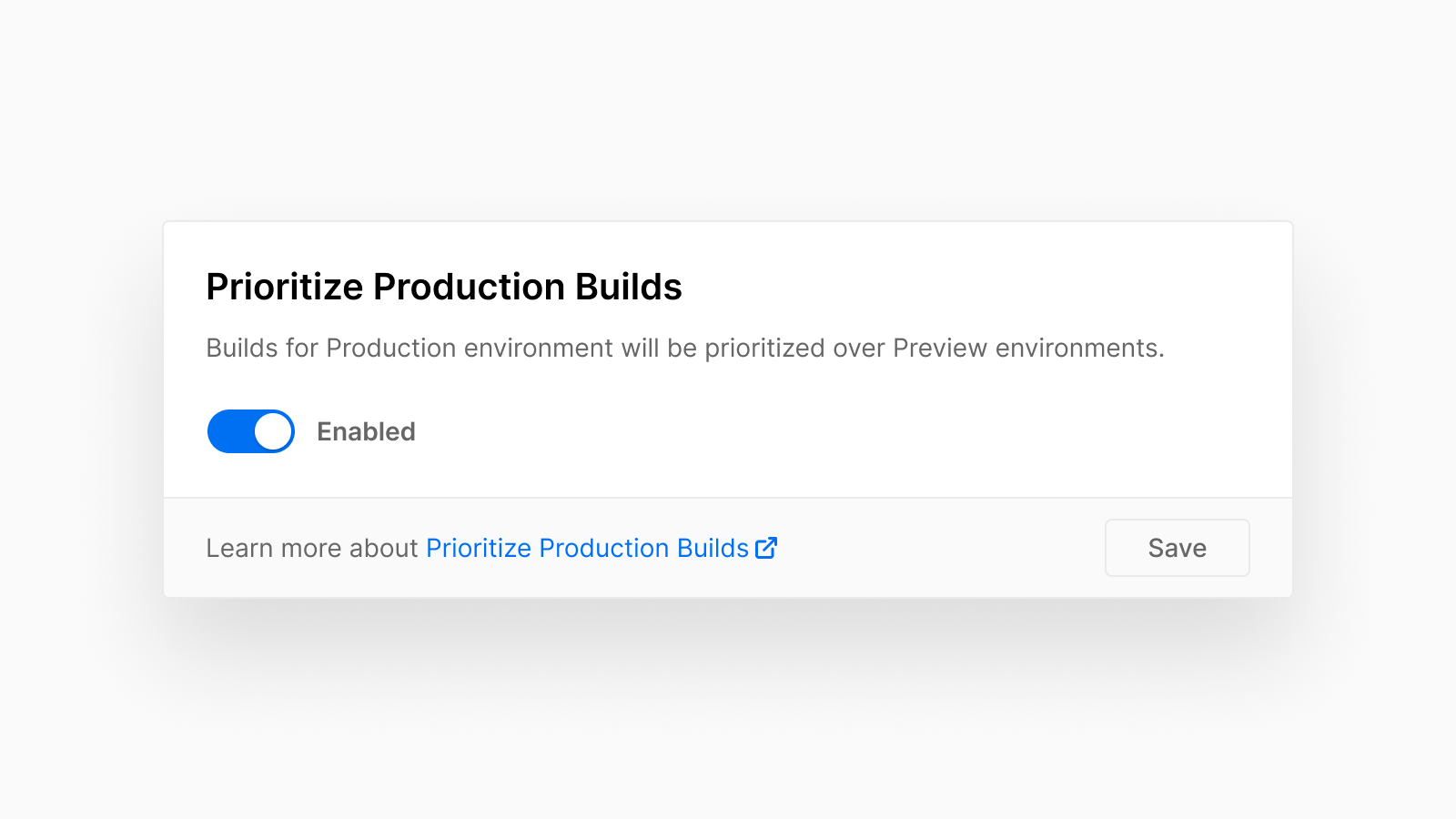

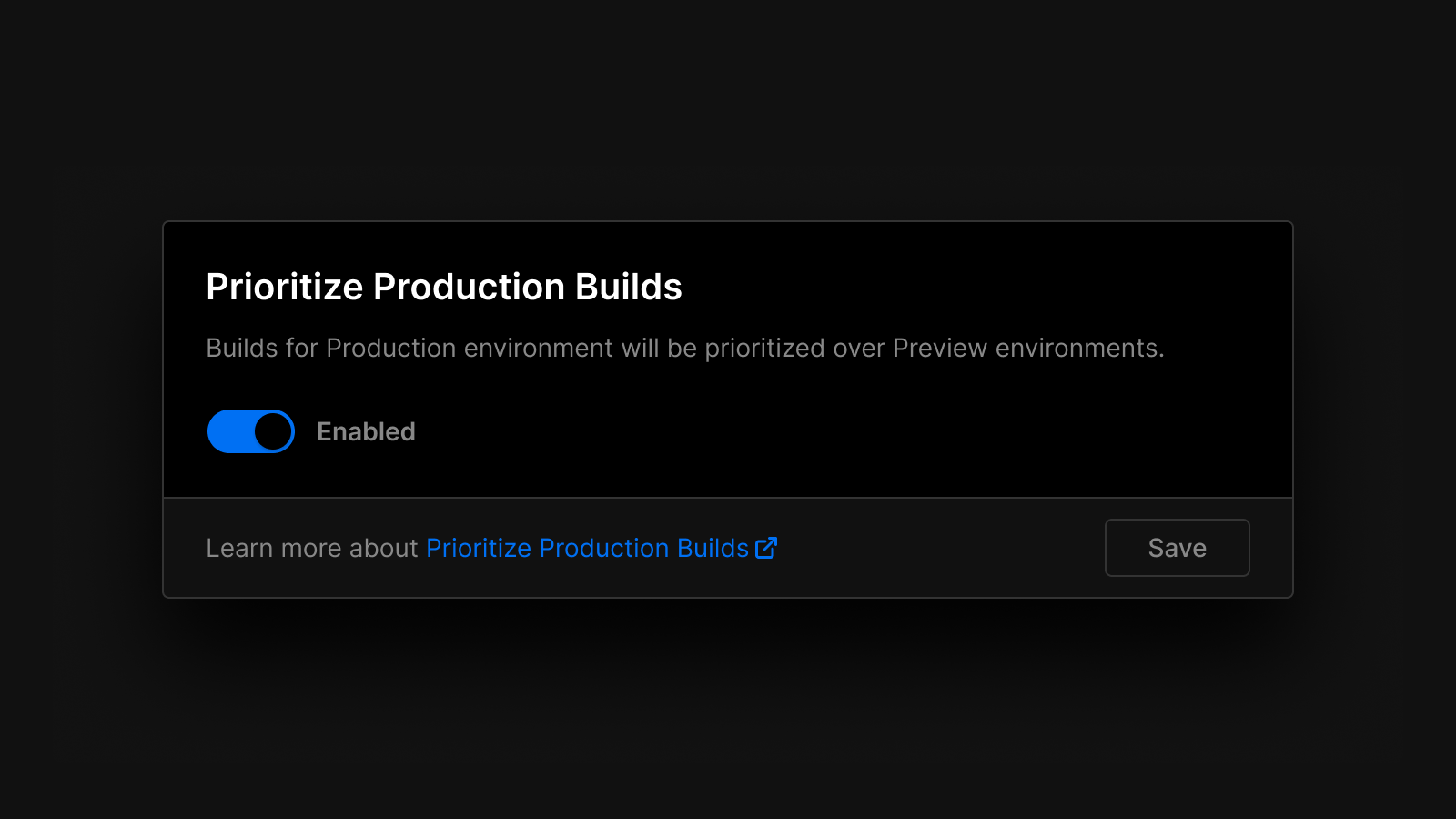

Prioritize production builds available on all plans

To accelerate the production release process, customers on all plans can now prioritize changes to the Production Environment over Preview Deployments.

With this setting configured, any Production Deployment changes will skip the line of queued Preview Deployments and go to the front of the queue.
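The queueing behavior described above can be sketched as a simple ordering rule. The types and the `enqueue` function are hypothetical, and the choice to keep production builds in first-in, first-out order among themselves is an assumption rather than documented behavior.

```typescript
type Target = "production" | "preview";

// Hypothetical representation of a queued build.
interface QueuedBuild {
  id: string;
  target: Target;
}

// Returns a new queue with the build added.
// Preview builds join the back of the queue. Production builds are placed
// ahead of every queued preview build, but behind production builds that
// were already waiting (assumed FIFO among production builds).
function enqueue(queue: QueuedBuild[], build: QueuedBuild): QueuedBuild[] {
  if (build.target === "preview") return [...queue, build];
  const firstPreview = queue.findIndex((b) => b.target === "preview");
  const insertAt = firstPreview === -1 ? queue.length : firstPreview;
  return [...queue.slice(0, insertAt), build, ...queue.slice(insertAt)];
}
```

For example, adding a production build to a queue holding two preview builds places it first, so it is the next build to start when a slot frees up.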

You can also increase your build concurrency limits to start multiple builds at once. Additionally, Enterprise customers can contact sales to purchase enhanced build machines with larger memory and storage.

Check out our documentation to learn more.
